Search "in app feedback tools" and Google returns five different categories of software as if they were the same thing: micro-surveys, visual feedback and bug reporting, in-app messaging, analytics with feedback bolted on, and mobile SDKs. Most top-10 listicles mix them without explaining which frame fits which problem, so readers pick by reputation or price and end up with the wrong tool.

After two years building the Feeqd widget and watching customers set up in-app feedback programs, I have seen the same failure repeat. Teams buy Pendo for a 10-person startup and use 8% of it. Teams pick Hotjar to chase a bug they cannot reproduce, then ignore session replay, the part of the tool built for that job. Teams add Survicate and then wonder why they still cannot track feature requests.

This post fixes that. It defines the 5 frames of in-app feedback, lists the real tools inside each frame, and gives you a four-question decision framework. Frame first, tool second.

The 5 Frames of In-App Feedback

Google treats these as interchangeable, but they solve different problems with different signal shapes. Pick the frame that matches the decision you need to make.

| Frame | Signal type | Best for | Example tools |
|---|---|---|---|
| A. Micro-surveys | Quantitative targeted (NPS, CSAT, CES) | Catching drift at specific moments | Survicate, Qualaroo, Sprig |
| B. Visual feedback / bug reporting | Screenshots + behavior recordings | Diagnosing UI issues you cannot reproduce | Hotjar, Userback, Instabug |
| C. In-app messaging + feedback | Conversations | Support-led product feedback | Intercom, Zendesk Messaging |
| D. Product analytics + feedback | Usage plus sentiment | Correlating behavior with opinion | Pendo, Amplitude, Aha! |
| E. Feature requests + voting | Aggregated demand | Prioritizing roadmap | Feeqd, Featurebase, Canny |

Most teams need more than one frame over time. The question for tool selection is which frame is primary for you right now. Start there, and add others when the pain forces you to.

Quick Comparison Table

| Tool | Frame | Starting price | Best for | Mobile native |
|---|---|---|---|---|
| Pendo | D | Quote-based | Analytics + feedback | Yes |
| Hotjar | B | $32/mo Plus | Visual + heatmaps | No (web + mobile web) |
| Survicate | A | $99/mo | Lightweight surveys | Yes |
| Qualaroo | A | $80/mo | Contextual nudges | Yes |
| Sprig | A | $175/mo | Research-grade surveys | Yes |
| Userback | B | $79/mo | Visual bug reports | Web focus |
| Instabug / Luciq | B | Quote-based | Mobile SDK bug reporting | Yes (iOS/Android) |
| Intercom | C | $39/mo Essential | Messaging + surveys | Yes |
| Amplitude | D | Free tier | Behavior + surveys | Yes |
| Canny | E | $79/mo Starter | Feedback + roadmap | No native SDK |
| Featurebase | E | $59/mo | Feedback + changelog | No native SDK |
| Feeqd | E | Free, $19/mo Pro | Widget + boards + roadmap | No native SDK |

Prices are the 2026 starting tier where vendors publish them. Enterprise and mobile SDK vendors (Pendo, Instabug) are quote-based.

Tools by Frame

Frame A: Micro-surveys (contextual pop-ups)

Structured survey widgets that appear at specific moments in the product, usually NPS post-onboarding, CSAT post-interaction, or a single quick question tied to a user action.

Survicate ($99/mo) is the generalist. Strong HubSpot, Intercom, and Zapier integrations. Good if your marketing stack already lives there.

Qualaroo ($80/mo) specializes in contextual "nudges" and adds AI sentiment analysis to open-ended responses. Good if you run lots of unstructured text questions.

Sprig ($175/mo) is the research-grade option with statistical rigor for sample sizes and survey depth. Good if surveys are a primary UX research channel, not a side tool.

Frame B: Visual feedback and bug reporting

Screenshots, screen recordings, session replays, and heatmaps. Signal is visual: what the user saw, where they clicked, what broke.

Hotjar ($32/mo Plus) is the category leader. Heatmaps, session recordings, and feedback buttons in one tool. Strong both on web and mobile web.

Userback ($79/mo) is focused on the "customer records a video and annotates the screen" pattern. Good for qualitative bug reporting from users who already talk to you.

Instabug / Luciq (rebranded from Instabug, quote-based for enterprise tiers) is the mobile SDK standard. Native iOS and Android, with crash reporting, in-app surveys, and session replay all in one SDK. This is the tool I have seen recommended most often on r/ProductManagement and Quora for native mobile apps.

Frame C: In-app messaging and feedback

Combines chat, support bots, surveys, and product tours. The feedback is embedded in a messaging flow rather than a separate survey or board.

Intercom ($39/mo Essential, though real product-team usage starts higher) is the category leader. Messaging is the primary job, surveys and feedback are secondary but well-executed.

This frame is the right fit when your team structure already leans on conversations as the primary customer channel. If support and product share the same inbox, Intercom makes that inbox do double duty.

Frame D: Product analytics plus feedback

Analytics platforms that layer feedback capture on top of behavior tracking. Signal is the combination: you see what a user did AND how they felt.

Pendo (quote-based, starts in the low five figures per year for real usage) is the category leader for product analytics plus feedback in one tool. Powerful. Overkill for teams under 50.

Amplitude (free tier for up to 10M events per month, paid tiers quote-based) is the alternative with a stronger analytics engine. Its surveys are newer and less mature than Pendo's.

Aha! (product-team focused, paid plans starting around $59/user/month) adds roadmap and ideation to the analytics + feedback mix.

Frame E: Feature requests plus voting (often overlooked in in-app listicles)

This is the frame that most in-app feedback listicles miss, because they equate "in-app" with "survey inside the app." But feature request widgets are also in-app, and they aggregate a different signal: what users want you to build next.

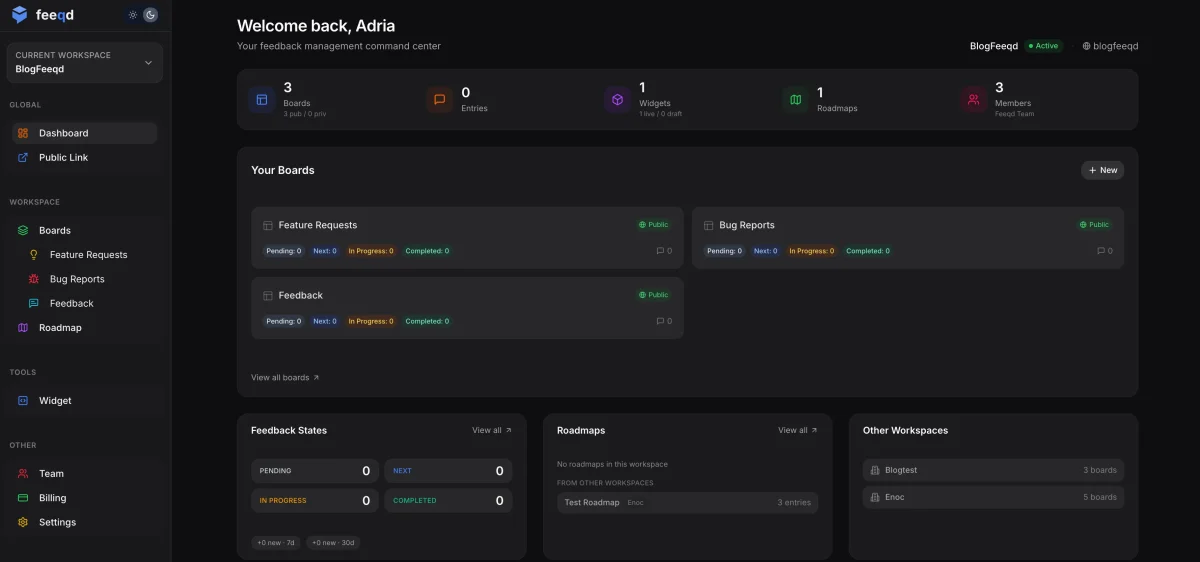

Feeqd (Free plan with one board and unlimited feedback, Pro $19/month) uses an 18KB Preact widget that loads before users notice it is there. Bundles widget, voting boards, and public roadmap in one plan. Best for product teams of 1 to 50 running continuous feedback loops.

Canny ($79/mo Starter) is the category leader for polished feedback UI. Stronger brand recognition than most alternatives. See our Canny alternatives comparison for where competitors beat it.

Featurebase ($59/mo) bundles feedback plus roadmap plus changelog. Good middle ground.

Decision Framework: Which Frame Fits Your Team?

Four questions, in order. Stop at the first yes.

- Do users struggle with visible UI issues you cannot reproduce? If yes, Frame B (Hotjar for heatmaps and session replay, Userback for annotated screenshots, Instabug for native mobile).

- Do you need structured answers to specific questions at specific moments? If yes, Frame A (Survicate for lightweight, Sprig for research-grade).

- Are you already tracking usage and want feedback tied to user behavior? If yes, Frame D (Pendo if budget allows, Amplitude if you already use it).

- Do you need to aggregate what users want built next? If yes, Frame E (Feeqd, Featurebase, or Canny).

If the dominant job is customer conversations rather than any of the above, Frame C (Intercom). Most teams start with one frame and add a second within six months. The common combinations I see working: Frame E for prioritization plus Frame A for post-ship validation, or Frame B for bug depth plus Frame E for feature intake.
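The four questions and the Frame C fallback can be written down as a small function. This is only a sketch of the ordering logic described above; the key names (`cannot_reproduce_ui_issues` and so on) are hypothetical labels invented for the example, not part of any tool's API.

```python
from typing import Optional

# The four questions, in the order the framework asks them.
# Key names are hypothetical labels chosen for this sketch.
QUESTIONS = [
    ("cannot_reproduce_ui_issues", "B"),    # visual feedback / bug reporting
    ("needs_structured_answers", "A"),      # micro-surveys
    ("wants_feedback_tied_to_usage", "D"),  # analytics plus feedback
    ("needs_aggregated_demand", "E"),       # feature requests plus voting
]


def pick_primary_frame(answers: dict[str, bool]) -> Optional[str]:
    """Return the frame for the first question answered yes.

    Falls back to Frame C when conversations are the dominant job,
    and returns None when nothing applies yet.
    """
    for key, frame in QUESTIONS:
        if answers.get(key, False):
            return frame  # stop at the first yes
    if answers.get("conversations_are_primary_channel", False):
        return "C"
    return None
```

A team that answers yes to both the bug question and the demand question still gets Frame B back, because the order of the questions is the priority. The second yes is a hint about which frame to add later.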

What About Website vs App vs Mobile?

Scope matters and the labels are slippery.

Website-only feedback (marketing site, landing page, static content): see our cluster post on best feedback widgets for websites for the scoped comparison. Different tool set than in-app.

In-app, meaning web app or SaaS product: the 5 frames above cover it. All the tools listed work inside a web application.

Native mobile apps (iOS/Android): the SDK tier narrows significantly. Instabug / Luciq is the practical standard, with a mature SDK that bundles crash reporting, in-app surveys, and session replay. Alchemer Mobile (formerly Apptentive) is the enterprise alternative, stronger on customer lifecycle campaigns inside the app. Hotjar works on mobile web (your product opened in mobile Safari or Chrome) but not as a native SDK, so if your app is React Native or Swift-only, Hotjar is not the fit. This SDK fragmentation is why mobile teams often pick a different in-app feedback tool than web teams even inside the same company.

Feature requests that span all three: Feeqd, Canny, and Featurebase all work across surfaces because they operate at the board level rather than the SDK level. Users reach the board through a link or a widget on any surface.

If you want the architectural overview of how widgets fit into an in-app feedback stack, our feedback widget pillar covers it.

Common Mistakes Picking In-App Feedback Tools

Picking from a top-10 list without identifying your frame. Top-10 lists give you tools. They do not give you frames. Start with frame, end with tool.

Overbuying (buying Pendo for a team of 10). Pendo is powerful. It is also quote-based with contracts that start in the low five figures annually. A 10-person startup can get 80% of what it needs from Amplitude's free tier plus a $19/month feedback board. Buy Pendo when you have 50+ people and a data analyst dedicated to the product team.

Underbuying (using an NPS tool when you need visual bug reports). The inverse mistake. An NPS survey will never tell you where the checkout flow breaks. If users are hitting bugs you cannot reproduce, Frame B is the answer, not a better survey tool.

Not checking mobile native support. Many tools branded as "in-app feedback" are web-only. If your product is a native mobile app, verify iOS/Android SDK support before you sign. The Frame B mobile specialists (Instabug, Alchemer Mobile) exist for a reason.

FAQ

What are in-app feedback tools?

In-app feedback tools are software solutions that collect user feedback, feature requests, or bug reports directly inside a web or mobile application without sending users to an external form. They split into five frames: micro-surveys (Survicate, Qualaroo, Sprig), visual feedback and bug reporting (Hotjar, Userback, Instabug), in-app messaging (Intercom), analytics with feedback (Pendo, Amplitude), and feature requests with voting (Feeqd, Featurebase, Canny). The right tool depends on which frame matches your primary decision.

What is the simplest way to add feedback to my app?

The simplest approach for the first 10 users is often a "Send Feedback" link in your settings that opens a pre-filled email. It takes 20 minutes to build and captures early signal. Once volume passes roughly 5 submissions per week, you need aggregation, tagging, and ideally voting. At that point an embeddable widget with a feedback board (Feeqd has a free plan with one board and unlimited feedback) is a better fit than an overflowing inbox.
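For illustration, the pre-filled email link is a `mailto:` URL with a percent-encoded subject and body. The sketch below builds one on the server side. The address `feedback@example.com`, the function name, and the idea of pre-filling the app version and current screen are all assumptions for the example.

```python
from urllib.parse import quote


def feedback_mailto(to: str, app_version: str, screen: str) -> str:
    """Build a mailto: link with a pre-filled subject and body."""
    subject = f"Feedback on v{app_version}"
    # mailto bodies use CRLF line breaks, which quote() encodes as %0D%0A.
    body = (
        f"Screen: {screen}\r\n"
        "\r\n"
        "What happened, or what would you like to see?\r\n"
    )
    return f"mailto:{to}?subject={quote(subject)}&body={quote(body)}"


# Hypothetical usage: render this URL as the href of the "Send Feedback" link.
link = feedback_mailto("feedback@example.com", "1.4.2", "Settings")
```

Pre-filling the version and screen is optional, but it saves a round of "which page were you on?" replies while volume is still low enough to answer each email by hand.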

Is there a free in-app feedback tool?

Yes. Feeqd has a free plan with one board and unlimited feedback entries. Amplitude has a free tier up to 10 million events per month that includes basic in-product surveys. PostHog is open-source and free to self-host with a generous cloud free tier. Free tiers from Hotjar and Survicate exist but are more limited on sessions and responses.

Do I need a dedicated tool or can I use email and Typeform?

Email plus Typeform is fine for the first 20 to 50 feedback submissions. It breaks down once multiple users request the same thing, because email and Typeform do not deduplicate, do not aggregate demand, and do not support voting. If you are shipping a product and trying to prioritize from feedback, you need a board with voting. Typeform is a survey tool, not a product feedback system.
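To make "aggregate demand" concrete, here is a minimal sketch of what a board does that an inbox does not: group requests that say the same thing and count distinct users behind each one. The `(user_id, title)` input shape and the crude title normalization are assumptions for the example. Real boards rely on users voting on existing posts or on manual merging, not on string matching.

```python
def normalize(title: str) -> str:
    """Crude grouping key: lowercase and collapse whitespace."""
    return " ".join(title.lower().split())


def aggregate_demand(requests: list[tuple[str, str]]) -> list[tuple[str, int]]:
    """Count distinct users per request, highest demand first.

    `requests` is a list of (user_id, title) pairs, one per submission.
    """
    voters: dict[str, set[str]] = {}
    for user_id, title in requests:
        # A set per request means a user who asks twice counts once.
        voters.setdefault(normalize(title), set()).add(user_id)
    counts = [(title, len(users)) for title, users in voters.items()]
    # Sort by vote count descending, then title for a stable order.
    return sorted(counts, key=lambda pair: (-pair[1], pair[0]))
```

With email or Typeform, three messages about dark mode from two people look like three separate items. Here they collapse into one request with two votes.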

What is the best in-app feedback tool?

There is no single best tool because the category splits into five frames that solve different problems. For visual bugs and session replay, Hotjar. For structured surveys, Survicate or Sprig. For analytics plus feedback combined, Pendo. For feature requests with voting, Feeqd, Canny, or Featurebase. Pick the frame that matches your primary decision first, then compare the two or three tools inside that frame.

Should I trust Reddit recommendations over tool listicles?

Reddit recommendations tend to be more honest than vendor-written listicles but are also frame-biased: a thread in r/iOSProgramming will push you toward mobile SDKs like Instabug, while a thread in r/SaaS will push you toward Pendo or Canny. Both can be right, both can be wrong for your situation. The better pattern is to identify your frame first (Frame A-E), then cross-reference Reddit discussions inside that frame. Conflicting advice across threads usually means the commenters are in different frames, not that they are wrong.

Conclusion

Most in-app feedback tool failures are frame mismatches, not tool mismatches. Identify the frame first, pick a tool inside it second, and plan for adding a second frame in six months.

For the broader voice of customer category (the tier above in-app tools), see our sister post on voice of customer software. For the pillar that covers the widget architecture generally, see feedback widget. For a tool that bundles voting boards, in-app widgets, and public roadmaps (Frame E plus A-lite plus roadmap) in one transparent plan, try Feeqd free. One board, unlimited feedback, no credit card.
