How to ask for feedback from customers: pick the right channel for the moment, write a short prompt that states what you'll do with the answer, and respect a timing rule so you don't burn the channel. This guide gives you the scripts, the timing, and the templates, sorted by channel and goal.

Most advice on asking for feedback stops at "ask at the right time" and moves on. That's not useful. After two years running Feeqd, I've tested feedback prompts across five channels with thousands of users, and the difference between a 2% response rate and a 20% response rate is never "timing" in the abstract. It's whether the prompt matches the channel, the moment, and a clear ask.

One thing to disambiguate up front: "feedback" and "reviews" are two different asks. If you want public 5-star ratings for Google or Yelp, that's a review request, a transaction where the customer trades their time for your SEO boost. If you want product input to improve what you build, that's a feedback request, a trade where you promise to act on what they say. The scripts, timing, and expectations are different for each. This post covers product feedback in depth, with a short section at the end for review requests.

The 3 Timing Rules That Determine Response Rate

Most failed feedback requests break one of these rules before the user even reads the prompt.

Rule 1: Ask when the experience is fresh. A customer's memory of a specific interaction decays fast. For support tickets, asking within 24 hours of resolution pulls 3–5× the response rate of asking a week later. For onboarding CSAT, asking within the first session beats asking at day 7. The fresher the memory, the more specific the feedback.

Rule 2: Ask where the moment happens. If the experience was in-app, ask in-app. If it was over email, ask over email. Sending a Typeform survey link over email to ask about a widget interaction loses users at every hop. Channel friction kills response rates.

Rule 3: Ask at most once per meaningful interval. The interval varies by channel: NPS quarterly, CSAT after each support resolution, feature request prompts on-demand. Users don't resent being asked for feedback. They resent being asked for the same feedback twice in a week.

None of these rules are about "politeness." They're about information freshness, channel fit, and respect for attention. Apply all three and your feedback program stops looking like survey spam.
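Rules 1 and 3 can be checked mechanically before any prompt goes out. The sketch below is illustrative rather than a prescribed implementation: the function name, the channel keys, and the reading of "quarterly" as 90 days are assumptions, and the 24-hour freshness window is borrowed from the support-ticket example above.

```python
from datetime import datetime, timedelta
from typing import Optional

# Assumed minimum gap between prompts on the same channel (Rule 3).
# "Quarterly" is read as 90 days; CSAT has no gap because it follows
# each support resolution.
MIN_INTERVAL = {
    "nps": timedelta(days=90),
    "csat": timedelta(0),
}

# Assumed freshness window (Rule 1), taken from the 24-hour support example.
FRESHNESS_WINDOW = timedelta(hours=24)


def should_prompt(
    channel: str,
    now: datetime,
    last_prompt: Optional[datetime] = None,
    event_time: Optional[datetime] = None,
) -> bool:
    """Return True if a prompt on this channel respects Rules 1 and 3."""
    # Rule 1: if the prompt is tied to an event, the event must be recent.
    if event_time is not None and now - event_time > FRESHNESS_WINDOW:
        return False
    # Rule 3: enough time must have passed since the last prompt on this channel.
    if last_prompt is not None and now - last_prompt < MIN_INTERVAL[channel]:
        return False
    return True
```

Rule 2 (ask where the moment happens) is a routing decision rather than a timing check, so it is left out of this sketch.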

How to Ask for Feedback From Customers: 5 Channels That Work

Each channel serves a different feedback goal. Use the wrong channel for the wrong goal and you'll either get silence or misleading answers. The rest of this guide walks through all five, with scripts and when to use each.

Channel 1: In-app feedback widget (always-on, contextual)

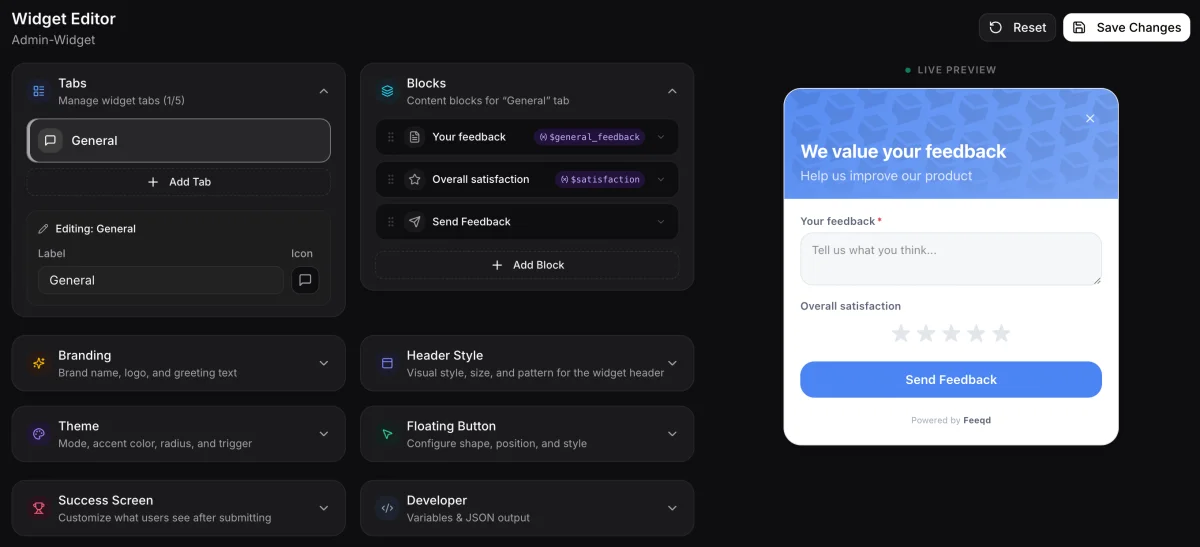

The in-app widget is the default channel for product feedback when the customer is actively using your product. A floating button that opens a short form on click is the simplest version. It captures feature requests, bug reports, and feature-specific frustration while the context is live.

When to use it: continuous collection of product feedback from active users. Best for feature requests and bug reports.

Script example (widget label): "Suggest a feature" or "Share feedback."

Form fields: one required text field ("What would you change?"), optional category dropdown (feature request, bug, other), optional email for follow-up. No more than 3 fields.
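As a rough sketch, the three-field form maps onto a small data model with one required field and two optional ones. The class and function names here are hypothetical, and the email check is deliberately loose; this is not a description of how any particular widget validates input.

```python
from dataclasses import dataclass
from typing import Optional

# The three dropdown options named above.
ALLOWED_CATEGORIES = {"feature request", "bug", "other"}


@dataclass
class WidgetSubmission:
    message: str                    # required: "What would you change?"
    category: Optional[str] = None  # optional dropdown
    email: Optional[str] = None     # optional, for follow-up only


def validate(submission: WidgetSubmission) -> list[str]:
    """Return a list of problems; an empty list means the submission is valid."""
    errors = []
    if not submission.message.strip():
        errors.append("message is required")
    if submission.category is not None and submission.category not in ALLOWED_CATEGORIES:
        errors.append("unknown category")
    if submission.email is not None and "@" not in submission.email:
        errors.append("email looks malformed")
    return errors
```

Only the message is enforced. Making the category or email mandatory would add exactly the friction the widget is meant to avoid.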

Timing rule: always available, never triggered unsolicited. The customer chooses the moment.

The widget works because it's frictionless when motivation is high. If a user hits a wall on a specific feature, they click the widget right there. No email, no redirect, no delay. This is the pattern we run at Feeqd with our own feedback widget: 18KB, a floating button, one field, posting directly to our board.

Channel 2: Post-interaction email (tactical, immediate)

The post-interaction email targets a specific completed experience: a support ticket resolution, a purchase, an onboarding milestone, a cancelled subscription. It's the right channel for CSAT-style satisfaction questions tied to one event.

When to use it: after a specific, bounded interaction. Never as a generic "we'd love your feedback" blast.

Script example (post-support email):

Hi [Name], we closed ticket #4312 this morning. On a scale of 1–5, how would you rate the resolution? [1] [2] [3] [4] [5]. One click, no login required.

Timing rule: within 24 hours of the interaction. After 48 hours, response rates drop fast.

The two mistakes to avoid: (1) asking an open question ("How was your experience?") that requires the customer to draft paragraphs, and (2) asking multiple questions in one email. One question per post-interaction email. Multi-question surveys belong in a different channel.
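"One click, no login required" means each rating button has to carry the ticket and the score in its own URL. One way to do that safely is to sign each link, sketched below with Python's standard `hmac` module. The endpoint URL, the secret, and the function names are placeholders, and signed links are an assumption of this example, not something the channel requires.

```python
import hashlib
import hmac
from urllib.parse import urlencode

# Hypothetical values: replace with your own endpoint and a real secret.
BASE_URL = "https://example.com/csat"
SECRET = b"replace-me"


def sign(ticket_id: str, score: int) -> str:
    """HMAC over ticket and score so a link cannot be altered or forged."""
    message = f"{ticket_id}:{score}".encode()
    return hmac.new(SECRET, message, hashlib.sha256).hexdigest()


def rating_links(ticket_id: str) -> dict[int, str]:
    """Build one signed URL per score on the 1-5 scale."""
    links = {}
    for score in range(1, 6):
        query = urlencode(
            {"ticket": ticket_id, "score": score, "sig": sign(ticket_id, score)}
        )
        links[score] = f"{BASE_URL}?{query}"
    return links


def verify(ticket_id: str, score: int, sig: str) -> bool:
    """Check a clicked link's signature using a constant-time comparison."""
    return hmac.compare_digest(sign(ticket_id, score), sig)
```

Each of the five links becomes the target of one button in the email, and the receiving endpoint calls `verify` before recording the score.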

Channel 3: NPS survey prompt (quarterly, relationship)

NPS (Net Promoter Score) is the channel for a relationship-level loyalty question. It runs quarterly, reaches the whole active base, and gets you a directional number plus qualitative follow-ups.

When to use it: once per quarter, maximum. Ask your active user base (not trialists) to rate 0–10, then ask one open follow-up.

Script example (in-app or email NPS):

On a scale of 0–10, how likely are you to recommend [Product] to a colleague? [Optional follow-up after rating]: What's the main reason for your score?

Timing rule: fixed quarterly cadence. Don't trigger NPS off-cycle for specific events; that's what CSAT is for.

The math on NPS is well-documented but often misapplied. Our NPS calculator walks through the formula, and the deeper framing of when NPS beats CSAT is in our NPS vs CSAT comparison. For most product teams, the NPS number matters less than the open-text follow-up. That's where the actionable signal lives.
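For reference, the standard NPS formula is the percentage of promoters (scores of 9 or 10) minus the percentage of detractors (scores of 0 through 6); scores of 7 and 8 count toward the total but toward neither group. A minimal sketch, with a hypothetical function name:

```python
def nps(scores: list[int]) -> float:
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one response")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    # Passives (7-8) are counted in the denominator only.
    return 100 * (promoters - detractors) / len(scores)
```

With five responses of 10, 10, 9, 8, and 5, there are three promoters and one detractor, so the score is 100 × (3 − 1) / 5 = 40.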

Channel 4: Exit / churn survey (high-signal, low-response)

When a customer cancels or downgrades, they're leaving with the information you most need. Exit surveys capture the reason at the moment of decision. Response rates are modest (5–15% is normal), but the respondents give you the highest-signal feedback you'll ever collect.

When to use it: immediately on cancel flow, before the cancellation is finalized.

Script example (cancel flow screen):

Before you go, what's the main reason you're cancelling today? (Pick one, or skip) ☐ Too expensive for my needs ☐ Missing a feature I need: _______ ☐ Found an alternative: _______ ☐ Not using it enough ☐ Other: _______ [Skip] [Submit and cancel]

Timing rule: inline with the cancel flow. Never email a churned user a survey two weeks later. They've moved on and won't reply.

A key detail: don't block the cancellation behind the survey. Let them skip. A mandatory survey before cancel feels hostile and will spike negative reviews.

Channel 5: 1-on-1 customer interview (qualitative, scheduled)

Interviews are the channel for depth. You're not collecting a score or a feature vote. You're trying to understand the underlying need behind a behavior or a piece of written feedback.

When to use it: monthly cadence, 3–5 interviews per cycle. Target power users, churned users, or users whose usage patterns are outliers.

Script example (interview invite email):

Hi [Name], I'm [Your name], the [Founder / PM] at [Product]. I'm running a round of 20-minute conversations with a handful of users this month to understand how you're using [Product] and what's not working. Would you be up for a call this week or next? As a thank you, I'll comp your next month.

Timing rule: opt-in, scheduled. Never cold-call.

Interviews are the only feedback channel where you're asking for significant time. The incentive (a comped month, a gift card, early access to a feature) is table stakes. Without it you'll book 20% of your target; with it you'll book 80%.

Copy-Paste Templates by Situation

These are the exact templates I use. Modify the bracketed fields and ship.

Template 1: Post-support CSAT email (one question, one click)

Subject: How did we do on ticket #[ID]?

Hi [Name],

We marked ticket #[ID] as resolved this morning. Quick one: how would you rate the support?

[😀 Great] [😐 Okay] [🙁 Not great]

Thanks, [Your name]

Template 2: Post-onboarding check-in (sent day 7, opens a conversation)

Subject: One week in, how's it going?

Hi [Name],

You joined [Product] a week ago. Real quick: what's the one thing that's been harder than you expected?

Hit reply with a sentence and I'll read it myself.

[Your name], [Founder / PM] at [Product]

Template 3: Feature request follow-up (close the loop after a user submits)

Subject: Update on your feature request: [title]

Hi [Name],

Quick update on the [title] request you submitted. [We shipped it / It's on the roadmap for Q2 / We've decided not to pursue it because X].

Thanks for taking the time to share it. The roadmap is built on input like yours.

[Your name]

Template 4: NPS quarterly email

Subject: A 15-second question about [Product]

Hi [Name],

On a scale of 0–10, how likely are you to recommend [Product] to a colleague?

[0] [1] [2] [3] [4] [5] [6] [7] [8] [9] [10]

(One click, no form, no login.)

Thanks, [Your name]

Template 5: Interview invite

Subject: 20 minutes to trade notes?

Hi [Name],

I'm running short conversations this month with [Product] users who've [criteria: been active 60+ days / cancelled last month / hit feature X regularly]. Your usage pattern stood out.

Would you be up for a 20-minute call next week? I'd love to hear what's working and what isn't. As a thank you, I'll comp your next month.

[Your name]

[Calendly / Cal.com link]

Every template shares a structure: a specific subject line, one ask, a short body, no filler. Long emails from vendors get ignored. Short emails with one clear ask get answered.

How to Ask for Reviews (Different From Feedback)

If what you actually want is a public 5-star review on Google, G2, Capterra, or Product Hunt, the ask is different. Reviews are a marketing asset; feedback is a product input. Don't mix the two.

Rule: only ask users who've told you they're happy. Asking a cold customer for a public review risks getting a negative one. Use NPS or CSAT as a filter: promoters (NPS 9–10) and high-CSAT respondents are the pool to ask for a review.

Script example (post-positive-NPS email):

Hi [Name], thanks for the 10 on our recent NPS question. If you have 60 seconds and feel like helping us out, would you mind leaving a quick note on [G2 / Product Hunt]? Here's the link: [URL]

The ask is optional, gated by positive signal, and single-link. Never ask the whole user base for reviews, because you'll train your least-happy users to leave them. Reddit's r/CustomerSuccess has repeated threads on this pattern, and the consensus there is consistent. The 10/5/3 rule (engage at 10 feet, greet at 5, assist at 3) is a hospitality heuristic, not a feedback framework, but its principle applies here: proximity before ask.
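The gating rule amounts to a filter over your latest survey answers. In the sketch below, the `Customer` shape and the function name are hypothetical, and "high CSAT" is assumed to mean 4 or 5 on a 1–5 scale; the NPS threshold of 9 is the promoter cutoff mentioned above.

```python
from dataclasses import dataclass
from typing import Optional

PROMOTER_MIN = 9  # NPS promoters score 9-10
HIGH_CSAT_MIN = 4  # assumption: "high CSAT" means 4 or 5 on a 1-5 scale


@dataclass
class Customer:
    email: str
    nps: Optional[int] = None   # latest 0-10 answer, if any
    csat: Optional[int] = None  # latest 1-5 answer, if any


def review_candidates(customers: list[Customer]) -> list[Customer]:
    """Keep only customers who have given an explicit positive signal."""
    return [
        c
        for c in customers
        if (c.nps is not None and c.nps >= PROMOTER_MIN)
        or (c.csat is not None and c.csat >= HIGH_CSAT_MIN)
    ]
```

Customers with no score at all are excluded on purpose: silence is not a positive signal.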

Response Rate Benchmarks

What to expect, by channel, for a reasonably healthy product with engaged users:

| Channel | Realistic response rate | Notes |
|---|---|---|
| In-app widget | 0.5–2% of MAU per week | Low per-user rate, but always-on |
| Post-support CSAT email | 20–35% | Within 24 hours of resolution |
| NPS quarterly email | 15–25% | Higher if sent in-app |
| Exit / churn survey | 5–15% | Low response, high signal |
| Interview invite (with incentive) | 40–80% of targeted users | Depends on the incentive |
| Cold "share your feedback" blast | 1–3% | Avoid, signal is poor |

If you're below these numbers, the issue is almost always prompt quality or timing, not channel choice. Long prompts, multi-question surveys, or asks disconnected from a recent interaction suppress response rates across every channel. Nielsen Norman Group's research on survey length confirms the pattern: completion rates drop sharply as surveys extend past a handful of questions.
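To compare your own numbers against the table, compute responses divided by prompts sent and check which side of the range you land on. The sketch below copies four of the ranges from the table; the dictionary keys and function names are hypothetical, and the widget and interview rows are omitted because their denominators (MAU per week, targeted users) differ.

```python
# (low, high) response-rate ranges in percent, copied from the table above.
BENCHMARKS = {
    "post_support_csat": (20.0, 35.0),
    "nps_email": (15.0, 25.0),
    "exit_survey": (5.0, 15.0),
    "cold_blast": (1.0, 3.0),
}


def response_rate(responses: int, sent: int) -> float:
    """Responses as a percentage of prompts sent."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return 100 * responses / sent


def compare(channel: str, responses: int, sent: int) -> str:
    """Return 'below', 'within', or 'above' relative to the benchmark range."""
    rate = response_rate(responses, sent)
    low, high = BENCHMARKS[channel]
    if rate < low:
        return "below"
    if rate > high:
        return "above"
    return "within"
```

For example, 30 replies to 200 post-support emails is a 15% response rate, which falls below the 20–35% range and points at prompt quality or timing.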

Common Mistakes

Asking everyone the same question. A new trialist, a two-year power user, and a churned customer need different questions. Segment before you ask.

Treating feedback as a one-way capture. If users submit feedback and never hear back, the channel dies within two quarters. Every feedback submission needs an acknowledgment and, for feature requests, a status update when the decision is made. Our guide on how to close the feedback loop covers the mechanics.

Over-asking. I've seen products send NPS, CSAT, feature surveys, and re-engagement emails to the same user in the same week. Users learn to ignore feedback prompts. Respect the interval rule above.

Asking open questions when you need aggregate data. "What would you change about our product?" is great for a 1-on-1 interview. It's terrible for a 10,000-user blast because you can't aggregate free-text responses without a research budget. For aggregate demand on specific features, a voting board is a better tool: feature voting boards convert individual requests into ranked signal automatically.

Confusing reviews and product feedback. This is the mistake I see most. Conflating the two leads to reviews that are really feature requests (wasted on G2) and feature requests that are really marketing asks (wasted on your roadmap).

FAQ

How do you politely ask for feedback?

Politeness in feedback requests isn't about tone. It's about brevity and specificity. The most respectful prompts are the shortest ones: they ask one question, make the response a single click or a sentence at most, and state what you'll do with the answer. "Hi [Name], we closed your ticket this morning, quick 1–5 rating on the resolution?" is more polite than a four-paragraph apology followed by a multi-question survey. Short, specific, and one ask. That's the formula.

What is the best way to ask for feedback from customers?

The best way depends on what you're trying to learn. For product feature requests, the best channel is an in-app widget or a voting board: both let users submit in the moment and aggregate demand. For satisfaction with a specific interaction, a post-interaction email with a 1–5 scale works best. For loyalty and relationship signal, a quarterly NPS survey. For underlying needs, a scheduled 1-on-1 interview with an incentive. There is no universally "best" method. The right method is the one that matches the question you need answered. If you want a multi-channel framework, our user feedback collection guide covers the full picture.

What is the 10/5/3 rule in customer service?

The 10/5/3 rule is a hospitality heuristic: acknowledge a customer at 10 feet of distance (eye contact), greet them at 5 feet (verbal greeting), and offer assistance at 3 feet (direct help). It comes from hotel and retail service traditions, not from product feedback. The principle that transfers to digital feedback is proximity before ask: warm up the relationship (through good onboarding, support, and positive interactions) before requesting feedback, and the response rate climbs. Don't ask a user you've never talked to for a long survey on day one.

What are the 3 C's of customer satisfaction?

The 3 C's typically refer to Consistency, Convenience, and Communication: the three levers McKinsey and other consultancies identify as drivers of customer satisfaction. They're a strategic frame, not a feedback technique. For a product team, the practical translation is: consistent experiences generate fewer complaints, convenient interactions earn higher CSAT, and clear communication (including closing the feedback loop) compounds both. It's a lens for what to improve, not a script for how to ask about it.

How often should you ask customers for feedback?

Cadence depends on the channel. In-app widgets run continuously (the user picks the moment). Post-interaction CSAT runs after every meaningful interaction. NPS runs once per quarter. Interviews run monthly, 3–5 conversations per cycle. Exit surveys trigger on the cancel event. The rule across all channels: no single user should see more than one prompt per week across your entire feedback program. Track your send volume per user, not just per channel, or you'll over-ask without realizing it.
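Tracking send volume per user means keeping one log per user across every channel and checking it before each send. A minimal sketch, assuming a hypothetical log of `(channel, sent_at)` pairs and reading "per week" as a rolling seven days:

```python
from datetime import datetime, timedelta

WEEK = timedelta(days=7)  # assumption: "per week" is a rolling 7-day window


def can_send(prompt_log: list[tuple[str, datetime]], now: datetime) -> bool:
    """True if this user has received no prompt, on any channel, in the last week.

    prompt_log holds (channel, sent_at) pairs for a single user.
    """
    return all(now - sent_at >= WEEK for _channel, sent_at in prompt_log)
```

The always-on widget never enters this log, because the user opens it themselves rather than being prompted.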

Closing Thought

Asking for feedback well is a matter of matching channel to moment, writing a short and specific prompt, and closing the loop when responses come in. No elaborate framework is required, just the discipline to pick the right channel, ask one question at a time, and follow up on what users share.

If you're setting up your feedback collection from scratch, our user feedback collection guide walks through the full multi-channel strategy, and our types of customer feedback framework maps each type to the roadmap decision it unlocks. Pair those with the channels and scripts above and you have a working feedback program, no survey software required.
