The types of customer feedback are the categories of input users provide, organized across two classic taxonomies (direct vs indirect, structured vs unstructured) and a third practical layer: the decision each type unlocks. Most articles stop at the first two. Product teams need the third.

Most articles on this topic classify and stop. They list NPS, CSAT, CES, direct, indirect, and move on. That framing is fine for CX teams. It's insufficient for product teams, who need more than a list of types. We need to know what each type lets us do.

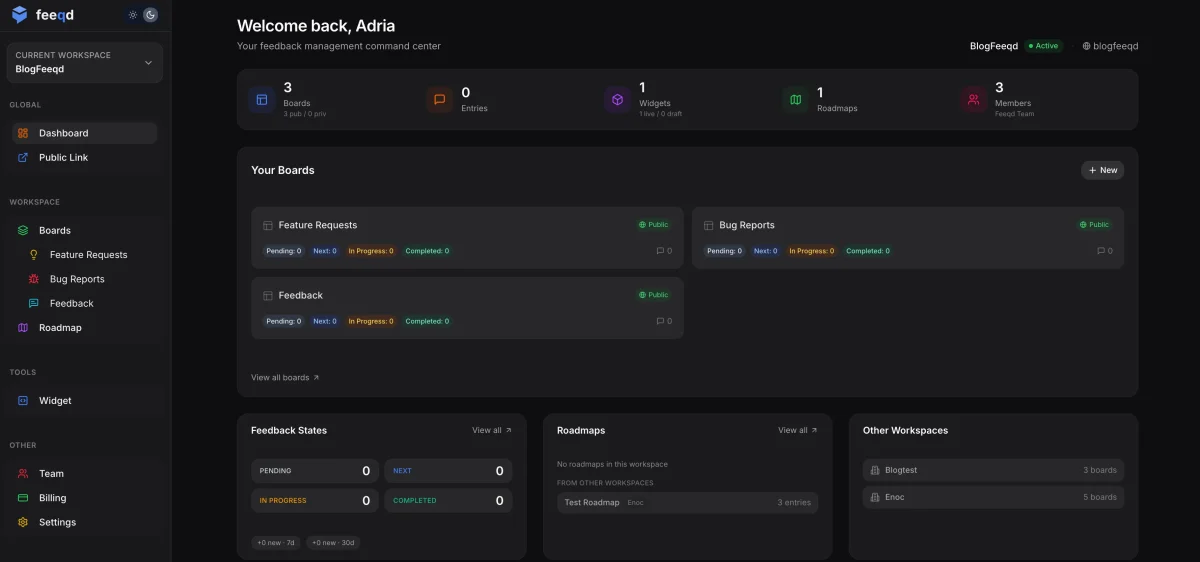

After two years building Feeqd, I've watched the same pattern repeat: teams collect across five or six feedback types, can't map any of them to a concrete roadmap decision, and conclude that feedback "doesn't scale." The problem isn't volume. It's that nobody connected type to action.

This article covers the different types of customer feedback, the two taxonomies everyone uses, and then a third layer product teams actually need: a type-to-decision mapping. Eight types, each with a signal it generates and a decision it unlocks.

Two Quick Taxonomies (And Why They're Not Enough)

The two dominant ways to classify feedback show up in almost every article on this topic:

- Direct vs Indirect feedback. Direct is feedback customers give you intentionally: surveys, interviews, submitted feature requests, NPS responses. Indirect is feedback you infer without asking: support ticket patterns, product usage data, social media mentions, churn behavior.

- Structured vs Unstructured feedback. Structured has a fixed format and aggregates cleanly: ratings, multiple choice, scores. Unstructured is free text, conversation, or behavior that needs interpretation before it becomes a metric.

Both frameworks are useful for describing how feedback arrives. Neither tells you what to do with it. A support ticket (indirect, unstructured) and a feature vote (direct, structured) can both point to the same roadmap item, or to two completely different ones. The classification alone doesn't separate them.

The rest of this post uses a third layer: feedback grouped by the decision each type unlocks. That's the layer that matters when your goal is shipping features users care about, not filling dashboards.

The 8 Types of Customer Feedback Product Teams Actually Use

What are feature requests with voting?

Feature requests are the most direct product input you can collect. A user tells you what they want built, and other users upvote to confirm demand.

Signal it generates: aggregate demand for specific functionality, weighted by vote count and segment.

Decision it unlocks: roadmap prioritization. A 40-vote request on our own board is a very different signal from a single Slack message, and the voting structure is what makes the difference.

Feature requests only work as a signal when collection is structured. Free-text email requests don't aggregate. A public feedback board with deduplication and voting converts one-off requests into ranked demand. For the operational side, feature request tracking covers the full intake workflow.
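To make "deduplication and voting" concrete, here is a minimal sketch of how raw votes could be folded into ranked demand. It is an illustration under assumptions, not how any particular board implements it: the `rank_requests` function, the `(user_id, title)` vote shape, and the `aliases` map of known duplicates are all hypothetical.

```python
from collections import defaultdict


def rank_requests(votes, aliases=None):
    """Rank feature requests by number of unique voters.

    votes   -- iterable of (user_id, request_title) pairs (assumed shape)
    aliases -- hypothetical map from a duplicate title to its canonical title
    """
    aliases = aliases or {}
    voters = defaultdict(set)
    for user_id, title in votes:
        key = title.strip().lower()          # normalize before matching
        canonical = aliases.get(key, key)    # fold known duplicates together
        voters[canonical].add(user_id)       # a set counts each user once
    # Highest unique-voter count first; ties broken alphabetically.
    return sorted(
        ((title, len(users)) for title, users in voters.items()),
        key=lambda pair: (-pair[1], pair[0]),
    )


votes = [
    ("u1", "Dark mode"),
    ("u2", "dark mode "),
    ("u1", "Dark mode"),      # repeat vote from the same user, ignored
    ("u3", "Night theme"),    # same request under another name
    ("u2", "CSV export"),
]
ranked = rank_requests(votes, aliases={"night theme": "dark mode"})
print(ranked)  # [('dark mode', 3), ('csv export', 1)]
```

Counting unique voters rather than raw submissions is the design choice that matters: five messages from one user stay one vote.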

What is NPS (Net Promoter Score)?

NPS asks one question: how likely is the customer to recommend your product on a 0–10 scale. It's a loyalty indicator, not a feature finder.

Signal it generates: directional change in customer loyalty over time. Useful as a trend, not as an absolute score.

Decision it unlocks: identify churn drivers, commit to one or two quarterly bets. If NPS drops from 42 to 30 in a quarter, something shifted. The follow-up open text question tells you whether it's pricing, a buggy release, or a competitor.

NPS is misused more than any other feedback type. Product teams chase NPS lifts as a goal in themselves, which pushes toward cosmetic changes instead of real improvements. Use it as a canary, not a KPI. Our NPS calculator handles the math cleanly.
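For reference, the arithmetic behind the score is short. The sketch below uses the standard NPS definition (percentage of promoters scoring 9 or 10, minus percentage of detractors scoring 0 to 6); the function name and sample data are made up for illustration, and this is not necessarily how the calculator mentioned above is implemented.

```python
def nps(scores):
    """Net Promoter Score from 0-10 answers: % promoters minus % detractors."""
    if not scores:
        raise ValueError("need at least one response")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)    # answered 9 or 10
    detractors = sum(1 for s in scores if s <= 6)   # answered 0 through 6
    # Passives (7-8) count in the denominator only.
    return 100 * (promoters - detractors) / len(scores)


this_quarter = [10, 9, 8, 7, 6, 0, 10, 9, 5, 10]   # invented sample responses
print(nps(this_quarter))  # 20.0
```

Because the result is a difference of two percentages, it ranges from -100 to 100, which is one more reason to read it as a trend rather than an absolute grade.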

What is CSAT (Customer Satisfaction)?

CSAT measures satisfaction with a specific interaction: a support ticket resolution, an onboarding flow, a checkout experience. It's bounded and contextual.

Signal it generates: satisfaction with a single touchpoint, usually on a 1–5 or 1–10 scale.

Decision it unlocks: fix that specific touchpoint. CSAT dropping 8 points after a release once told us we had a problem. When we dug in, it wasn't the product. It was a docs gap we found by cross-referencing support tickets. The decision was a docs rewrite, not a product pivot.

CSAT doesn't tell you whether to build feature X. It tells you whether a specific experience is working. Use our CSAT calculator to compute scores across cohorts.
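As a rough illustration of computing scores across cohorts, the sketch below uses one common CSAT convention: the percentage of responses rated 4 or 5 on a 1–5 scale. The threshold, the `(cohort, rating)` input shape, and the function names are assumptions for the example, not a description of the calculator above.

```python
from collections import defaultdict


def csat(ratings, satisfied_min=4):
    """Percent of ratings at or above satisfied_min (4 on a 1-5 scale)."""
    if not ratings:
        raise ValueError("need at least one rating")
    satisfied = sum(1 for r in ratings if r >= satisfied_min)
    return 100 * satisfied / len(ratings)


def csat_by_cohort(responses, satisfied_min=4):
    """responses: iterable of (cohort, rating) pairs (assumed shape)."""
    grouped = defaultdict(list)
    for cohort, rating in responses:
        grouped[cohort].append(rating)
    return {cohort: csat(r, satisfied_min) for cohort, r in grouped.items()}


responses = [   # invented sample data
    ("free", 5), ("free", 2), ("free", 4), ("free", 3),
    ("paid", 5), ("paid", 4), ("paid", 4), ("paid", 1), ("paid", 5),
]
scores = csat_by_cohort(responses)
print(scores)  # {'free': 50.0, 'paid': 80.0}
```

Splitting by cohort is what turns a single blended number into something you can trace back to a specific touchpoint or segment.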

What is CES (Customer Effort Score)?

CES asks how easy it was to accomplish something, for example "How much effort did it take to get your issue resolved?", answered on a 1–5 or 1–7 scale.

Signal it generates: friction in a specific flow. High effort means users are struggling.

Decision it unlocks: UX and usability improvements on specific flows. CES maps most cleanly to design work. If onboarding CES shows high effort, you fix onboarding. If support CES shows high effort, you streamline the support experience.

CES is underused by product teams because it's most common in support contexts. It translates well to product flows too. Our CES calculator walks through the formula.
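A small sketch of CES applied per flow: average the effort ratings for each flow and look at the one users find hardest. It assumes a 1–7 scale where a higher number means more effort, which matches the question wording above; some surveys invert the scale, so check yours first. The flow names and ratings are invented.

```python
from statistics import mean


def ces_by_flow(ratings_by_flow):
    """Average effort rating per flow. Assumes higher means more effort."""
    return {flow: mean(r) for flow, r in ratings_by_flow.items() if r}


def highest_effort_flow(ratings_by_flow):
    """Name of the flow with the highest average effort."""
    averages = ces_by_flow(ratings_by_flow)
    return max(averages, key=averages.get)


ratings = {   # invented sample data on a 1-7 effort scale
    "onboarding": [6, 5, 7],
    "support": [2, 3, 1],
    "checkout": [4, 4, 3, 5],
}
print(ces_by_flow(ratings))
print(highest_effort_flow(ratings))  # onboarding
```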

What are product reviews and social mentions?

Reviews on G2, Capterra, Product Hunt, and organic social mentions are unsolicited public feedback. They reach buyers before they reach you.

Signal it generates: brand perception, trust signals, and public objections.

Decision it unlocks: marketing, sales enablement, and brand positioning. Reviews rarely translate to direct roadmap input. A single G2 review of "needs better reporting" is a weaker demand signal than three upvotes on a specific reporting request on your own board, because the review author isn't in a structured voting system with other users.

Use reviews to surface objections for sales and catch sentiment shifts early. Don't mistake them for a prioritization input.

What are support tickets as a feedback type?

Support tickets are indirect feedback. Users didn't set out to tell you what to build. They set out to solve a problem, and in doing so, they revealed a gap.

Signal it generates: patterns of friction, confusion, and missing functionality, grouped by root cause.

Decision it unlocks: bug fixes, documentation improvements, and adjacent feature needs. A ticket category that spikes after a release means the release has an issue. A ticket category that recurs quarter after quarter points to a docs or UX problem.

The operational move here is tagging. The support team tags each ticket by product area and flags whether it contains a feature request. Untagged tickets are noise; tagged tickets are the richest indirect feedback stream a product team has.
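Once tickets carry tags, the patterns described above become simple counts. The sketch below compares ticket volume per product area before and after a release and reports the share of tickets flagged as feature requests. The ticket dict shape (`"area"`, `"feature_request"`) and the spike thresholds are assumptions chosen for the example; tune them to your own volume.

```python
from collections import Counter


def spiking_areas(before, after, ratio=2.0, min_count=5):
    """Product areas whose ticket count jumped after a release.

    before, after    -- lists of ticket dicts with an "area" tag (assumed shape)
    ratio, min_count -- illustrative thresholds, not a recommendation
    """
    prev = Counter(t["area"] for t in before)
    curr = Counter(t["area"] for t in after)
    return sorted(
        area for area, n in curr.items()
        if n >= min_count and n >= ratio * max(prev[area], 1)
    )


def feature_request_share(tickets):
    """Fraction of tickets flagged as containing a feature request."""
    if not tickets:
        return 0.0
    return sum(1 for t in tickets if t.get("feature_request")) / len(tickets)


# Invented sample data: ticket tags from the week before and after a release.
before = [{"area": "billing"}] * 3 + [{"area": "export"}] * 4
after = [{"area": "billing"}] * 8 + [{"area": "export", "feature_request": True}] * 5

print(spiking_areas(before, after))        # ['billing']
print(round(feature_request_share(after), 2))
```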

What is in-app behavior and usage data?

Usage data is indirect and quantitative. You observe what users do: which features get opened, which flows get abandoned, which segments engage daily versus monthly.

Signal it generates: validation or invalidation of feature hypotheses. If 3% of users open the new dashboard in the first week after launch, the hypothesis that users wanted this dashboard is weakly supported at best.

Decision it unlocks: double down, iterate, or sunset. Usage data also separates vocal feedback from actual demand. A feature requested loudly but never used after shipping is a lesson.

The caveat: usage data alone doesn't tell you why. Low adoption could mean the feature is bad, or users don't know it exists, or onboarding surfaces it at the wrong moment. Behavior invalidates hypotheses cleanly. It doesn't replace qualitative input.
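The adoption number in the dashboard example is a single ratio: users who opened the feature, divided by users who were active in the same window. A minimal sketch, with hypothetical user IDs and an assumed one-week window:

```python
def adoption_rate(active_users, feature_users):
    """Percent of active users who opened the feature at least once."""
    active = set(active_users)
    if not active:
        raise ValueError("no active users in the window")
    adopters = active & set(feature_users)   # ignore users outside the window
    return 100 * len(adopters) / len(active)


active = range(1, 201)                               # 200 active users in launch week
opened_dashboard = [3, 17, 42, 99, 150, 180, 999]    # user 999 was not active
rate = adoption_rate(active, opened_dashboard)
print(rate)  # 3.0
```

The denominator is the judgment call. Dividing by all registered users instead of active ones would make almost any feature look unadopted.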

What are user interviews as feedback?

User interviews and recorded sales or support calls are qualitative depth. Fifteen minutes with a customer tells you more about why they want something than a hundred survey responses.

Signal it generates: the underlying need behind a request. Users ask for solutions. Interviews reveal problems.

Decision it unlocks: discovery and feature framing. Interviews rarely drive "build X" decisions directly. They drive "rethink X" decisions, the kind that shape how a feature gets built, not just whether.

Two or three interviews per month across segments, sustained over a year, reshape how a product team thinks about users. Our voice of customer techniques guide covers the interview methods that work best for product teams.

The Feedback Type to Decision Matrix

| Type | Best signal for | Decision it unlocks | Update cadence |
|---|---|---|---|
| Feature requests (with voting) | Aggregate demand | Roadmap prioritization | Weekly review, monthly prioritization |
| NPS | Loyalty trend | Churn driver investigation, quarterly bets | Quarterly |
| CSAT | Touchpoint satisfaction | Fix specific experiences | Continuous, per interaction |
| CES | Flow friction | UX and usability improvements | Monthly review per flow |
| Reviews and social | Brand perception | Marketing and sales enablement | Continuous monitoring |
| Support tickets | Bug and friction patterns | Bug fixes, docs, adjacent features | Weekly tag review |
| Usage data | Feature validation | Double down, iterate, or sunset | Weekly for launches, monthly steady-state |
| User interviews | Underlying needs | Discovery and feature framing | 2–3 per month, always-on |

The matrix is the point of this post. If you can't map a type to a decision and a cadence, you're collecting feedback that won't be used. At Feeqd we built our feedback board around exactly this mapping, because connecting classification to action is what turns a feedback program from overhead into roadmap input.
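One way to apply the matrix as a checklist is to encode it as data and flag any feedback type you collect that has no decision and cadence attached. This is a sketch only: the `MATRIX` dict restates the decision and cadence columns from this post, while the `unmapped` helper and the sample input are hypothetical.

```python
from typing import NamedTuple


class Mapping(NamedTuple):
    decision: str
    cadence: str


# Decision and cadence columns from the matrix, keyed by lowercase type name.
MATRIX = {
    "feature requests": Mapping("Roadmap prioritization", "Weekly review, monthly prioritization"),
    "nps": Mapping("Churn driver investigation, quarterly bets", "Quarterly"),
    "csat": Mapping("Fix specific experiences", "Continuous, per interaction"),
    "ces": Mapping("UX and usability improvements", "Monthly review per flow"),
    "reviews and social": Mapping("Marketing and sales enablement", "Continuous monitoring"),
    "support tickets": Mapping("Bug fixes, docs, adjacent features", "Weekly tag review"),
    "usage data": Mapping("Double down, iterate, or sunset", "Weekly for launches, monthly steady-state"),
    "user interviews": Mapping("Discovery and feature framing", "2-3 per month, always-on"),
}


def unmapped(collected_types):
    """Feedback types being collected with no decision or cadence attached."""
    return [t for t in collected_types if t.strip().lower() not in MATRIX]


collecting = ["NPS", "Community forum", "Usage data"]   # hypothetical team setup
print(unmapped(collecting))  # ['Community forum']
```

Anything the check returns is either a type worth adding to your own matrix or a collection channel worth switching off.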

Product Team vs CX Team: Why Classification Differs

Most content about feedback types is written by enterprise CX platforms. Their audience is CX teams with researchers, survey budgets, and quarterly review cycles. That audience needs a different lens than a 15-person product team does.

| Dimension | CX team | Product team |
|---|---|---|
| Primary goal | Improve customer experience | Ship features users need |
| Dominant types used | NPS, CSAT, CES, interviews | Feature requests, usage data, support patterns |
| Cadence | Quarterly survey cycles | Always-on continuous |
| Analysis depth | Dedicated researcher, NLP tooling | PM + lightweight tagging |
| Success metric | NPS/CSAT lift | Feature adoption, retention |
| Typical toolset | Medallia, Qualtrics, Sprinklr | Feedback boards, analytics, widget |

CX teams classify feedback to report on customer sentiment. Product teams classify feedback to decide what to build next. Both are valid. They just produce different content and different frameworks.

If you're a product team reading a Medallia or Qualtrics guide, a lot of the advice won't fit your operating model. This post assumes the product team lens, which is why Feature Requests is type #1 instead of NPS. For context on the sister question, our voice of the customer primer explains how the CX and product framings diverge at the program level.

Common Mistakes Product Teams Make With Feedback Types

Misclassifying the signal. A CSAT dip after a release is not always a feature gap. It can be a docs issue, a pricing issue, or a support SLA issue. Before jumping to product changes, map the signal to its actual source.

Overweighting one type. All-NPS teams shift toward loyalty cosmetics and miss feature gaps. All-feature-requests teams build what power users ask for and miss deeper needs that only interviews surface. A balanced portfolio across three or four types beats depth in one.

Treating a vocal minority as aggregate demand. Early on I treated a very loud minority as signal and shipped a feature two users had requested insistently. It never got real adoption. After that we built voting in as a hard filter. If fewer than five unique users vote for a request, it's not a broad signal. It might still be the right call, but it's a different decision than "users are asking for this."
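The five-voter filter fits in a few lines. The sketch below counts distinct users rather than raw asks, which is exactly the distinction that separates a loud minority from broad demand. The function name and sample IDs are hypothetical; the default threshold of five comes from the rule described above.

```python
def is_broad_signal(voter_ids, min_unique_voters=5):
    """True when enough distinct users voted for the request."""
    return len(set(voter_ids)) >= min_unique_voters


loud_minority = ["u1", "u2", "u1", "u2", "u1", "u2", "u1"]   # 7 asks, 2 people
broad = ["u1", "u2", "u3", "u4", "u5"]                       # 5 asks, 5 people

print(is_broad_signal(loud_minority))  # False
print(is_broad_signal(broad))          # True
```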

Forgetting to close the loop regardless of type. Users who submit feedback, whether a feature request, a CSAT response, or a support ticket, stop contributing if they never hear back. Every feedback type needs a loop closure mechanic. Our full guide on how to close the feedback loop covers the playbook, and for teams building the full intake system from scratch the feedback system guide walks through the infrastructure.

FAQ

What are the different types of customer feedback?

Customer feedback types can be classified two ways. By source and nature, feedback is Direct (surveys, interviews, submitted requests) or Indirect (behavior, support patterns, social mentions), and Structured (ratings, scores) or Unstructured (free text, conversations). By the decision it unlocks, the eight types product teams use are: feature requests with voting, NPS, CSAT, CES, product reviews and social mentions, support tickets and help desk patterns, in-app behavior and usage data, and user interviews. The classification matters less than the mapping from type to roadmap action.

What are the five types of feedback?

There is no universal five-type list for customer feedback. The "five types of feedback" framing usually refers to organizational or coaching feedback (evaluative, appreciative, coaching, corrective, directive), which comes from HR and management contexts, not customer feedback. Most product teams operate across six to eight customer feedback types, which this guide covers.

What are the 4 types of CX?

One framing splits CX into four types: reactive, proactive, predictive, and prescriptive experiences. It is a strategic lens, sometimes attributed to McKinsey, for how companies deliver customer experience. That is a classification of CX delivery modes, not a breakdown of customer feedback inputs. The two are related but distinct: customer feedback is the input; CX strategy is what you do with it at an organizational level.

Which type of customer feedback is most important for product teams?

Feature requests with voting, in my opinion, because they're the only type that aggregates demand and signals a specific action in one structure. A 40-vote request on a public board is both evidence of broad interest and a concrete starting point for scoping. Other types are necessary (NPS for loyalty context, interviews for depth, usage data for validation), but feature requests are the type that most directly translates to roadmap items. That said, no product team should run on a single type. The mix is what produces good decisions.

Closing Thought

The eight types of customer feedback are all valid. They become useful when you map each one to a decision and a cadence. Classification without action is archive work. The type-to-decision matrix earlier in this post is the part most teams skip.

If you're building your collection setup now, start with our user feedback collection guide for channel selection, and pair it with voice of customer techniques for the qualitative methods that deepen each type. Feedback types are the what. The collection strategy and techniques are the how.
