NPS vs CSAT, in one sentence: NPS measures long-term loyalty at the account level, CSAT measures satisfaction with a specific interaction, and product teams usually need both plus a third signal (feature requests with voting) that neither metric captures.

| Dimension | NPS | CSAT |
|---|---|---|
| Question | "How likely are you to recommend us, 0–10?" | "How satisfied were you with [specific thing], 1–5?" |
| Scope | Relational (overall product/brand) | Transactional (one interaction) |
| Scale | 0–10, converted to -100 to +100 score | 1–5 or 1–7, converted to % satisfied |
| Best for | Retention risk, loyalty trend | Specific touchpoint diagnosis |
| Cadence | Quarterly | After every meaningful interaction |
| Decision it unlocks | "Should we worry about churn?" | "Is this specific flow/experience working?" |
| Where it fails | Cosmetic fixes chasing score lifts; vague diagnosis | No loyalty/retention signal; per-touchpoint only |

That's the short answer. The rest of this article is the long answer: what each metric actually does, where both fall short, why NPS specifically is taking heat in 2026, and what a product team should run instead when the question is "what do we build next?"

After two years running Feeqd and watching product teams pick one metric and wish it answered every question, I've come to think the NPS vs CSAT framing is the wrong starting point. Both are diagnostic. Neither is prescriptive. The teams that over-invest in either of them tend to end up with a dashboard they can't act on. If you want the broader listening-side context, our "what is voice of the customer" primer shows where satisfaction metrics fit in the overall VoC program.

What NPS Measures (And What It Doesn't)

NPS (Net Promoter Score) is a one-question loyalty metric. Customers rate from 0 to 10 their likelihood to recommend your product. Scores of 9–10 are Promoters, 7–8 are Passives, 0–6 are Detractors. Subtract the percentage of Detractors from the percentage of Promoters and you get a number between -100 and +100.
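
The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation behind any particular calculator; the function name `net_promoter_score` and the sample ratings are assumptions made up for the example.

```python
def net_promoter_score(ratings):
    """Return NPS (-100 to +100) from a list of 0-10 ratings."""
    if not ratings:
        raise ValueError("need at least one rating")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")
    promoters = sum(1 for r in ratings if r >= 9)   # 9-10
    detractors = sum(1 for r in ratings if r <= 6)  # 0-6
    # Passives (7-8) count toward the total but toward neither group.
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical sample: 5 Promoters, 3 Passives, 2 Detractors
sample = [10, 9, 9, 10, 9, 8, 7, 7, 6, 3]
print(net_promoter_score(sample))  # 30.0
```

Note that Passives still sit in the denominator, which is why a survey full of 7s and 8s scores 0 rather than something positive.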

What it does well: directional loyalty tracking. If your NPS drops from +42 to +28 over two quarters, something shifted in how customers feel about the product as a whole. The open-text follow-up ("What's the main reason for your score?") is where the useful signal lives; the score itself is just a trigger to read the comments.

What it doesn't do: tell you why, tell you what to fix, or tell you whether a specific feature is working. NPS is a lagging indicator of brand relationship. It doesn't know anything about your onboarding flow, your checkout, or whether feature X should ship next. Fred Reichheld's original 2003 HBR article that introduced NPS framed it as a retention predictor, not a feature prioritization tool. Teams that treat NPS as a product roadmap input are using it for something it wasn't designed for.

Our NPS calculator handles the math cleanly if you want to compute your own score without fighting a spreadsheet.

What CSAT Measures (And What It Doesn't)

CSAT (Customer Satisfaction Score) asks how satisfied a customer is with a specific interaction, on a 1–5 or 1–7 scale. "How satisfied were you with the support you received?" after a closed ticket. "How would you rate the checkout experience?" after a purchase. The score is the percentage of respondents who chose the top two boxes (4 or 5 on a 1–5 scale).
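
As a rough sketch of the top-two-box math, here is one way to compute it in Python. The function name `csat_percent` and the sample ratings are hypothetical, and treating 6 and 7 as the top two boxes on a 1–7 scale is my assumption, extended from the 1–5 rule above.

```python
def csat_percent(ratings, scale_max=5):
    """Percent of responses in the top two boxes of a 1..scale_max scale."""
    if not ratings:
        raise ValueError("need at least one rating")
    if any(r < 1 or r > scale_max for r in ratings):
        raise ValueError(f"ratings must be between 1 and {scale_max}")
    # Top two boxes: 4 or 5 on a 1-5 scale, 6 or 7 on a 1-7 scale (assumed).
    satisfied = sum(1 for r in ratings if r >= scale_max - 1)
    return 100 * satisfied / len(ratings)


# Hypothetical support-ticket ratings: 8 of 10 are a 4 or a 5
support = [5, 4, 4, 5, 3, 5, 4, 2, 5, 4]
print(csat_percent(support))  # 80.0
```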

What it does well: diagnose specific touchpoints. If support CSAT is 82% and onboarding CSAT is 61%, you know where to invest. CSAT is the most actionable feedback metric because it comes tied to a concrete event. You know what the respondent was rating, when they rated it, and you can often fix the underlying issue because it's bounded.

What it doesn't do: predict churn, measure loyalty, or aggregate to anything relationship-level. A customer can rate every support ticket a 5 and still cancel because your pricing changed or a competitor shipped the feature they've been waiting for. CSAT is high-resolution on moments and blind to the bigger picture.

The CSAT calculator walks through the math if you want to compute it across cohorts.

Decision Matrix: When to Use Which

For most product teams, the answer isn't "pick one." It's "use both, for different questions." Here's how to decide which metric to reach for.

| Your question | Metric to use | Why |
|---|---|---|
| Are customers likely to churn this quarter? | NPS | Loyalty trend is the leading indicator |
| Is our new onboarding flow working? | CSAT | Bounded touchpoint, needs specific feedback |
| Is the support team performing well? | CSAT | Per-ticket satisfaction, actionable |
| Are we holding attention over 12 months? | NPS | Relational metric, longitudinal view |
| Did the recent price change hurt us? | NPS (open text) | Score drop + comments will surface it |
| Which feature to ship next? | Neither, use voting / feature requests | Neither metric captures demand for new functionality |
| How did this specific release land? | CSAT | Tied to a specific event |
| Should we invest in customer success? | NPS | Relationship metric justifies CS investment |

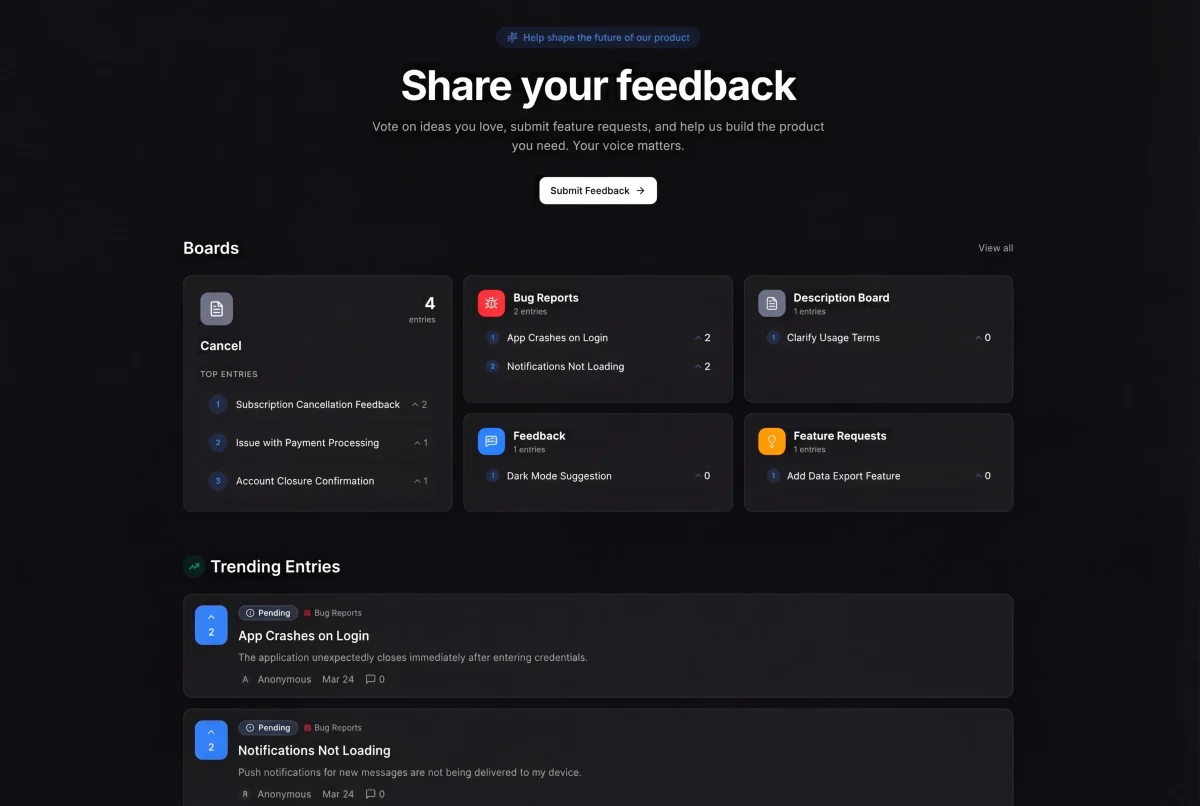

The row most product teams miss is "which feature to ship next": that is not an NPS or CSAT question. Both metrics tell you about satisfaction and loyalty. Neither tells you whether to build dark mode, SSO, or a mobile app next quarter. That's a different signal entirely: structured demand from users, usually collected through a feature voting board where users submit and upvote the things they want built.

I've watched teams try to extract roadmap direction from NPS comments. It produces an unranked list of wishes that gets prioritized by gut feel. Voting boards beat that workflow because the signal is aggregated and ranked automatically.
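
To make "aggregated and ranked automatically" concrete, here is a small sketch of the idea. It is not how any specific voting board works internally; the vote log format, the user IDs, and the `rank_requests` helper are all hypothetical.

```python
from collections import Counter

# Hypothetical vote log: one (user_id, request) pair per upvote click
votes = [
    ("u1", "SSO"), ("u2", "SSO"), ("u3", "dark mode"),
    ("u1", "dark mode"), ("u4", "SSO"), ("u2", "mobile app"),
    ("u1", "SSO"),  # repeat click from u1, should count once
]


def rank_requests(votes):
    """Return (request, vote_count) pairs, highest demand first."""
    unique_votes = set(votes)  # one vote per user per request
    counts = Counter(request for _, request in unique_votes)
    return counts.most_common()


print(rank_requests(votes))
# [('SSO', 3), ('dark mode', 2), ('mobile app', 1)]
```

The point of the sketch is the contrast with NPS comments: the ranking falls out of the data structure, with no one reading free text and guessing at priority.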

A Quick Word on CES (Because the SERP Asks)

CES (Customer Effort Score) is the third metric that keeps showing up alongside NPS and CSAT. It asks how easy a specific task was, usually phrased as "How much effort did it take to resolve your issue?" on a 1–5 or 1–7 scale. CES is narrower than CSAT: it's specifically about friction, not satisfaction.

Use CES when the experience involves the user trying to accomplish something (resolve a support issue, complete onboarding, check out) and you want to isolate effort from outcome satisfaction. A customer can be satisfied with the resolution but unhappy about how hard it was to get there; CES catches that distinction.

For most product teams, CES is a support-context metric. If you're running it in-product, it works best on specific flows (onboarding, a complex checkout) rather than as a generic product score. The CES calculator shows the formula.

Why NPS Is Under Fire in 2026

If you've been reading product communities over the past year, you'll have noticed NPS taking heat. A handful of specific critiques keep coming up, and they're worth understanding before you build a program around it.

Critique 1: the score incentivizes cosmetic changes. When NPS becomes a team KPI, people optimize for the number. That often means small delighters (nicer empty states, polish passes) rather than structural improvements. A 3-point NPS lift from cosmetics is easier to claim than a 3-point lift from rebuilding onboarding. The metric rewards what's cheapest to move.

Critique 2: survey fatigue has eroded response quality. NPS was designed when surveys were rare. Today, every app sends one. Response rates have fallen, and the remaining respondents skew toward the extremes (very happy or very angry), which distorts the score.

Critique 3: the 0–10 scale is culturally biased. In the US, people treat 7 as "good." In Japan or Germany, 7 is often the default "neutral." If you run NPS across geos without calibration, the numbers aren't comparable. Most teams don't calibrate, and silent segment bias enters the score.
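
One cheap way to see this effect is to compute NPS per region instead of pooling everything. The sketch below does not calibrate anything; it only makes the split visible. The region codes, the ratings, and the helper names are invented for illustration.

```python
from collections import defaultdict


def nps(ratings):
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


def nps_by_segment(responses):
    """responses: iterable of (segment, rating) pairs."""
    buckets = defaultdict(list)
    for segment, rating in responses:
        buckets[segment].append(rating)
    return {segment: nps(ratings) for segment, ratings in buckets.items()}


# Hypothetical responses tagged with a region code
responses = [
    ("US", 10), ("US", 9), ("US", 8), ("US", 6),
    ("JP", 8), ("JP", 7), ("JP", 7), ("JP", 6),
]
print(nps_by_segment(responses))          # {'US': 25.0, 'JP': -25.0}
print(nps([r for _, r in responses]))     # 0.0 when pooled
```

In this made-up data the pooled score of 0 hides a 50-point gap between the two regions, which is the kind of silent segment bias the critique describes.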

Critique 4: the "recommend" question is increasingly artificial. The original NPS question assumed word-of-mouth was the dominant growth channel. For B2B SaaS with long sales cycles, self-serve signup, or API products, "would you recommend?" is a weird question: half the respondents aren't in a position to recommend anything.

The "NPS is outdated" framing now has enough traction that Google's AI Overview surfaces the critique alongside practitioner threads on Reddit r/CustomerSuccess and sites like NPSIsTheWorst.com. The meta point: NPS is still useful as a trend indicator, but the practice of treating it as a north star metric is being reconsidered industry-wide.

That doesn't mean drop NPS. It means run it without treating its number as the goal.

Should You Run Both NPS and CSAT?

Yes, if you can afford the overhead of running and reviewing two metrics. The two are complementary: NPS catches relationship drift quarterly, CSAT catches per-touchpoint issues continuously. Running both gives you a day-to-day signal (CSAT) plus a long-term signal (NPS), with each answering a question the other can't. The only reason to run just one is resource constraint, and if that's the case, CSAT is the more actionable choice because it's tied to fixable events. Just don't treat either metric's number as a KPI target on its own.

Why Product Teams Need a Third Metric

The underrated insight in the NPS vs CSAT debate is that both metrics are listening metrics. They measure how customers feel about what you've already built. Neither tells you what to build next.

For product teams, that gap is the most expensive one. You can have +50 NPS, 85% CSAT, and still ship the wrong feature because nobody told you what users actually wanted. Structured feature demand (a voting board, a feature request inbox with deduplication and upvoting) is the metric that fills this gap.

At Feeqd we ran NPS internally for about six months and eventually deprioritized it. Not because the score was bad, but because the score didn't change our roadmap. What did change our roadmap: a 40-vote request on our public feedback board. That was a concrete decision unlocked by a concrete signal, and it wasn't captured by any satisfaction metric.

The product team feedback stack that holds up in practice looks like this:

| Signal | Metric / tool | What it answers |
|---|---|---|
| Loyalty trend | NPS (quarterly) | Are we holding the relationship? |
| Touchpoint health | CSAT (continuous) | Is this specific experience working? |
| Aggregate feature demand | Voting board / feature requests | What do we build next? |
| Flow friction | CES (per flow) | Where are users struggling? |
| Qualitative depth | Customer interviews | Why does this pattern exist? |

NPS and CSAT cover two of the five. Covering the other three is how product teams move from reactive satisfaction management to proactive roadmap building. For the full program-level framing, our guide to voice of customer analytics walks through how product teams structure the listening side end-to-end, and our guide to voice of customer techniques maps the qualitative methods that feed NPS and CSAT. On the collection side, the user feedback collection guide covers the multi-channel setup, and the types of customer feedback guide maps each signal to the decision it unlocks.

Common Mistakes With NPS and CSAT

Running both without tying either to a decision. A dashboard is not a program. If your NPS score comes in and nothing happens as a result, the measurement is overhead. Every NPS cycle needs a pre-committed action: "if the score drops by more than 5 points, we investigate the comments and commit to one quarterly bet."
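
A pre-committed rule like that is simple enough to write down as code, which also makes it harder to quietly ignore. This is a sketch only: the function name `needs_investigation` is hypothetical, and the 5-point default just mirrors the example rule above.

```python
def needs_investigation(previous_nps, current_nps, threshold=5.0):
    """True when NPS fell by more than `threshold` points between cycles."""
    drop = previous_nps - current_nps
    return drop > threshold


# The +42 to +28 slide mentioned earlier is a 14-point drop
print(needs_investigation(42, 28))  # True
# A 3-point dip stays under the threshold
print(needs_investigation(42, 39))  # False
```

The threshold itself is a judgment call. What matters is that it is agreed before the score arrives, not after.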

Chasing the NPS number as a goal. See critique 1 above. NPS is a trend signal, not a KPI to lift. Teams that set "improve NPS by 10 points this year" targets usually ship cosmetic changes and burn out on measurement.

Confusing CSAT for product satisfaction. CSAT is per-touchpoint. A customer can CSAT-rate your support flow a 5 every time and still be unhappy with the product overall. Don't roll up per-ticket CSAT and call it "product satisfaction."

Under-using the follow-up text. The open-text "why?" field in both NPS and CSAT is where the diagnostic value lives. Some teams don't read it. A quarterly review of the text comments is more useful than the headline score.

Missing the voting signal entirely. Most product teams collect NPS and CSAT, some add CES, and stop there. The gap is structured feature demand. Closing that gap with a voting mechanism is what makes the rest of the metrics actionable on the roadmap. Pairing it with our guide on how to close the feedback loop turns the collected signal into a visible commitment.

FAQ

Are NPS and CSAT the same?

No. NPS measures long-term loyalty at a relational level ("would you recommend us?" on a 0–10 scale, asked quarterly). CSAT measures satisfaction with a specific interaction ("how satisfied were you with [specific thing]?" on a 1–5 scale, asked after the interaction). They have different scales, different scopes, different cadences, and answer different questions. A product with high CSAT can still have low NPS if customers are satisfied with individual interactions but unhappy with the overall relationship. The two metrics are complementary, not interchangeable.

How do you calculate NPS from CSAT?

You don't, not reliably. NPS and CSAT measure different things and use different scales, so there's no valid formula to derive one from the other. Some teams attempt rough mappings (e.g., CSAT 5 responses ≈ NPS Promoters), but this loses the relational vs transactional distinction that makes NPS useful. If you need both, run both as separate surveys. If you only want to run one, pick based on the question you need answered: NPS for loyalty trend, CSAT for touchpoint diagnosis.

What is the difference between NPS and customer satisfaction?

NPS (Net Promoter Score) specifically measures loyalty and willingness to recommend, on a 0–10 scale. "Customer satisfaction" is the broader concept, and CSAT (Customer Satisfaction Score) is the specific metric that measures it, typically on a 1–5 scale tied to a concrete interaction. NPS asks about relationship; CSAT asks about an experience. You can have satisfied customers who wouldn't recommend you (they're happy but not enthusiastic), or enthusiastic customers with occasional CSAT dips (they love you overall but had a bad support ticket). The metrics measure overlapping but distinct things.

Why is NPS outdated?

The critique of NPS in 2026 centers on four points: the score incentivizes cosmetic optimization rather than real improvement, survey fatigue has lowered response quality, the 0–10 scale has cultural biases that distort cross-geo comparisons, and the "recommend" question doesn't fit many modern product contexts (B2B SaaS, self-serve tools, API products). Publications like CMSWire and sites like NPSIsTheWorst.com have made the case that NPS has been over-elevated from a useful trend indicator to an inappropriate north-star metric. NPS isn't useless, but treating its number as a goal has fallen out of favor. Use it as a signal, not a KPI.

NPS vs CSAT vs CES: which to pick?

All three answer different questions. NPS is relational loyalty (use quarterly, for churn risk). CSAT is satisfaction with a specific interaction (use after every meaningful touchpoint). CES is effort on a specific task (use on support resolutions, onboarding, checkout, or any flow where friction is the concern). For a product team, the practical rule: run NPS quarterly, run CSAT after meaningful interactions, and add CES on flows where you specifically care about friction. If you have to pick only one, CSAT is the most actionable because it comes tied to a specific event you can fix. And if the real question is "what feature to build next," none of the three answers it. That's a voting/feature-request question.

Closing Thought

NPS vs CSAT isn't really a versus. Both metrics earn their place in a product team's toolkit, each answering a question the other can't. The trap is treating either as the answer to every question, especially the roadmap question. Loyalty scores and satisfaction scores are listening metrics. Building the right product also needs a demand metric, and that's where voting boards and structured feature requests fill the gap the satisfaction metrics leave open.

For the collection side, the user feedback collection guide walks through the multi-channel setup, and our guide on asking customers for feedback covers the scripts and timing for each channel. Pair those with a voting mechanism and you have what NPS and CSAT alone can't give you: a decision system, not just a dashboard.
