Voice of customer techniques are the methods product teams use to capture customer input (voting boards, widgets, surveys, interviews, support ticket mining, usability testing) and translate it into roadmap decisions. The right technique depends on the decision you're trying to make.

Most articles on this topic give you a list of 10 to 13 methods and leave you to figure out which one to run when. That is the wrong order. Pick the wrong technique for a decision and you either drown in data or collect the wrong kind of signal.

This post inverts the usual structure. I list 10 VoC techniques product teams actually use, and for each one I tell you the signal it produces, the decision it unlocks, and the effort it costs to run. Two years of running voice of customer loops at Feeqd for our own product, and of watching hundreds of customer teams run theirs, have made one thing clear: the technique is not the point. The decision is.

Quick disambiguation before we start: if you searched "voice of customer techniques" looking for Six Sigma Voice of Customer Table (VoCT) or Critical to Quality (CTQ), this article covers the CX and product flavor of VoC. For the quality-engineering version, ASQ has the canonical reference.

Two VoC Frameworks (Quick Disambiguation)

The term "voice of the customer" means two different things to two different communities, and most articles conflate them. Worth separating before we go further.

CX / Product VoC (what this post covers)

The practice of capturing customer input across channels (surveys, widgets, boards, interviews, support tickets, reviews) and using it to drive product and experience decisions. Audience: product managers, CX leads, research teams. Output: roadmap items, experience improvements, prioritized backlogs.

Six Sigma VoC / VoCT / CTQ

A quality-engineering discipline where customer requirements feed into process improvement. VoC gets translated into a Voice of Customer Table (VoCT), then into Critical to Quality (CTQ) specifications that constrain manufacturing or service processes. Audience: quality engineers, operations, DMAIC practitioners.

| Dimension | CX / Product VoC | Six Sigma VoC |
|---|---|---|
| Audience | PMs, CX, research | Quality engineers, operations |
| Output | Roadmap items, UX fixes | CTQ specs, process controls |
| Typical tools | Widgets, boards, surveys, interviews | VoCT matrix, affinity diagrams, CTQ tree |

Both are valid. They just solve different problems. The rest of this post is the CX and product flavor, which is what the vast majority of readers actually want.

The 10 Voice of Customer Techniques Product Teams Actually Use

1. Public Feature Voting Boards

What it is: A public board where customers submit feature requests and vote on each other's entries. Ranking emerges from aggregate demand, not internal opinion.

Signal: Aggregate demand with segmentation (who voted, what tier).

Decision unlocked: Prioritization. Which 3 to 5 items to commit to next quarter's roadmap.

Effort: Low to Medium. Setup is a weekend. Ongoing curation is weekly.

Example: One integration request on our own Feeqd board collected 40 votes from paid users in six weeks. It went on the roadmap the next cycle. We wouldn't have caught that signal through a survey. More on the mechanics in feature voting boards and feature request tracking.

2. In-App Feedback Widgets

What it is: A lightweight widget embedded in the product that captures feedback without users leaving the page they're on.

Signal: Low-friction continuous input, heavy on bug reports and UX friction.

Decision unlocked: Surface friction fast. Catch the thing that just broke before the user churns silently.

Effort: Low. One embed snippet, minimal maintenance.

Example: Our widget is 18KB and loads before users notice it's there. That is why low-friction capture works. If submitting feedback costs 30 seconds, most users won't bother. The feedback widget guide goes deeper.

3. Targeted Surveys (NPS, CSAT, CES)

What it is: Structured surveys that quantify loyalty (NPS), satisfaction with a specific interaction (CSAT), or effort required to complete a task (CES).

Signal: Quantitative trend data across segments and cohorts.

Decision unlocked: Identify drift, commit to 1 or 2 bets. NPS drops of 8 points tell you something shifted; CSAT dips isolate the broken interaction.

Effort: Low once tooling is set up. Cap every survey at 5 questions, 3 minutes.

Example: Run the numbers with the NPS calculator, CSAT calculator, or CES calculator to get a sense of what your sample size produces before you launch.
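If you'd rather see the arithmetic than trust a calculator, here is a minimal sketch of the three scores. It assumes the conventional scales (NPS on 0 to 10, CSAT on 1 to 5, CES as a simple mean); the function names and the sample responses are made up for illustration, and your survey tool may score CES differently.

```python
from statistics import mean


def nps(scores):
    """NPS from 0-10 ratings: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one response")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def csat(ratings, satisfied_from=4):
    """CSAT as the percentage of 1-5 ratings at or above `satisfied_from`."""
    if not ratings:
        raise ValueError("need at least one response")
    satisfied = sum(1 for r in ratings if r >= satisfied_from)
    return 100 * satisfied / len(ratings)


def ces(ratings):
    """CES as the mean effort rating (assumption: one common way to score it)."""
    return mean(ratings)


# Hypothetical sample of 10 NPS responses.
before = [10, 9, 9, 8, 7, 6, 10, 9, 3, 8]
# Same sample, except one promoter (9) turned into a detractor (6).
after = [10, 6, 9, 8, 7, 6, 10, 9, 3, 8]

print(nps(before))  # 30.0
print(nps(after))   # 10.0
```

With only 10 responses, a single respondent changing their mind moves the score by 20 points. That is the sample-size effect worth checking before you read meaning into a drop.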

4. Customer Interviews (1:1 Discovery)

What it is: 30 to 60 minute structured conversations with individual customers, typically run during discovery.

Signal: Qualitative depth. The "why" behind requests, the language customers use, the workarounds they've built.

Decision unlocked: Understand the problem behind the feature request. Inform early-stage discovery before engineering commits.

Effort: High. Recruiting is slow, interviews take an hour, analysis takes another hour.

Example: One interview revealed that three separate "export to CSV" requests were actually about moving data into a specific BI tool. Different problem, different solution.

5. Focus Groups and Async Video Feedback

What it is: Group discussions (live focus groups) or Loom-style customer-recorded videos for async input on concepts, mockups, or prototypes.

Signal: Group dynamics reveal disagreement; async video captures natural reactions without scheduling overhead.

Decision unlocked: Stress-test concepts early. Catch disagreement before you build.

Effort: Medium. Async video is cheaper than live focus groups. Skip live groups unless you're testing social dynamics.

Example: A 10-minute Loom review from five beta users flagged a confusing onboarding step within 48 hours. That is faster and cheaper than scheduling five live interviews.

6. Social Listening and Review Mining

What it is: Monitoring social platforms, G2, Capterra, Reddit, and forums for unsolicited mentions of your product or category.

Signal: Public, unfiltered, biased toward complaints and delighted extremes.

Decision unlocked: Brand and objection data, not direct roadmap input. What prospects think before they ever talk to you.

Effort: Low with tooling, Medium without.

Example: A Reddit thread about your category can surface three objections worth putting into sales battle cards, none of which tend to show up in your own support inbox.

7. Support Ticket Mining

What it is: Tagging and aggregating patterns in your support inbox. Every ticket is implicit feedback.

Signal: Indirect qualitative plus volume patterns. Bug clusters, docs gaps, adjacent feature requests.

Decision unlocked: Bug fix prioritization, documentation gaps, and adjacent feature opportunities you wouldn't ask a survey about.

Effort: Low to Medium. Depends on your support tool's tagging.

Example: Tagging three months of tickets can reveal that a single CSV export bug is being reported weekly under different phrasings. Aggregating the duplicates raises its priority immediately.
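A rough sketch of what that aggregation can look like once tickets carry a tag. The ticket tuples, tag names, and week labels below are invented for illustration; in practice they would come from whatever export your support tool offers.

```python
from collections import Counter

# Hypothetical tickets: (ISO week, subject line, tag applied during triage).
tickets = [
    ("2024-W01", "CSV download is empty", "csv-export-bug"),
    ("2024-W02", "Export file missing rows", "csv-export-bug"),
    ("2024-W02", "How do I invite a teammate?", "docs-gap"),
    ("2024-W03", "Spreadsheet export broken", "csv-export-bug"),
    ("2024-W03", "Can you integrate with our BI tool?", "feature-request"),
]


def tag_counts(tickets):
    """Count tickets per tag, regardless of how each ticket was phrased."""
    return Counter(tag for _, _, tag in tickets)


def weeks_seen(tickets):
    """Count the distinct weeks in which each tag appeared."""
    weeks = {}
    for week, _, tag in tickets:
        weeks.setdefault(tag, set()).add(week)
    return {tag: len(seen) for tag, seen in weeks.items()}


counts = tag_counts(tickets)
recurring = weeks_seen(tickets)
for tag, n in counts.most_common():
    print(f"{tag}: {n} tickets across {recurring[tag]} weeks")
```

Three differently worded subjects collapse into one tag that shows up every week, which is the pattern that is easy to miss when reading tickets one at a time.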

8. Usability Testing and Session Replay

What it is: Watching users (live or recorded) interact with the product, either in moderated sessions or via session-replay tools.

Signal: Behavioral observation. What users actually do, not what they say.

Decision unlocked: Fix specific flow friction. The 3-click task that takes 8 clicks. The field nobody fills out.

Effort: Medium. Session replay is low effort to set up; moderated usability tests are high effort.

Example: A session replay can show users scrolling past a primary CTA because the hero height pushed it below the fold. One CSS change recovers conversion without any new feature work.

9. Sales Call and Churn Interview Reviews

What it is: Systematic review of sales call recordings (deal-critical moments) and churn interviews (post-loss moments).

Signal: High-stakes qualitative. What actually blocked a deal or caused a cancellation.

Decision unlocked: Deal-critical features, churn drivers, objection handling.

Effort: Medium. Transcription tools help a lot; a PM dedicating 1 hour per week is enough for most teams.

Example: Three consecutive churn interviews citing the same missing integration are enough signal to move that integration from backlog to next quarter's commit.

10. Public Roadmaps as a Listening Surface

What it is: A counter-intuitive one. Publishing what you're planning, what's in progress, and what has shipped invites targeted feedback on priorities.

Signal: Reactions to your stated direction. Votes and comments on roadmap items carry different weight than raw feature requests.

Decision unlocked: Validate or re-order the roadmap. Spot the "wait, why isn't X on there?" pattern before you ship.

Effort: Low once you've got a roadmap tool. Update weekly. See public product roadmap for the full setup.

The Technique to Decision Matrix

This is the table I wish existed when I started running VoC. Every technique is plotted against the decision it unlocks.

| Technique | Signal type | Decision it unlocks | Cadence | Effort |
|---|---|---|---|---|
| Voting boards | Aggregate demand | Prioritization | Continuous | Low–Medium |
| In-app widget | Continuous qualitative | Surface friction fast | Continuous | Low |
| NPS / CSAT / CES | Quantitative trend | Commit to 1–2 bets | Monthly or quarterly | Low |
| Customer interviews | Qualitative depth | Inform discovery | Weekly or biweekly | High |
| Focus groups / async video | Qualitative group | Stress-test concepts | Per project | Medium |
| Social listening | Unsolicited public | Brand and objections | Continuous | Low–Medium |
| Support ticket mining | Indirect qualitative | Bugs, docs, adjacent gaps | Weekly | Low–Medium |
| Usability / session replay | Behavioral | Flow friction fixes | Per feature | Medium |
| Sales / churn interviews | High-stakes qualitative | Deal-critical features | Monthly | Medium |
| Public roadmap feedback | Reaction to direction | Validate or re-order roadmap | Continuous | Low |

Read the table like this: pick your decision first, then the technique. Not the other way around.
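To make "decision first" concrete, here is a small sketch that treats the matrix above as data and looks techniques up by decision. The rows are copied from the table (with plain hyphens in the effort labels); the `techniques_for` helper and the keyword matching are my own illustration, not a feature of any tool.

```python
# Effort levels from the matrix, ordered from cheapest to most expensive.
EFFORT_RANK = {"Low": 0, "Low-Medium": 1, "Medium": 2, "High": 3}

# (technique, decision it unlocks, effort), copied from the matrix.
MATRIX = [
    ("Voting boards", "Prioritization", "Low-Medium"),
    ("In-app widget", "Surface friction fast", "Low"),
    ("NPS / CSAT / CES", "Commit to 1-2 bets", "Low"),
    ("Customer interviews", "Inform discovery", "High"),
    ("Focus groups / async video", "Stress-test concepts", "Medium"),
    ("Social listening", "Brand and objections", "Low-Medium"),
    ("Support ticket mining", "Bugs, docs, adjacent gaps", "Low-Medium"),
    ("Usability / session replay", "Flow friction fixes", "Medium"),
    ("Sales / churn interviews", "Deal-critical features", "Medium"),
    ("Public roadmap feedback", "Validate or re-order roadmap", "Low"),
]


def techniques_for(decision_keyword, max_effort="High"):
    """Return techniques whose decision mentions the keyword, within an effort budget."""
    limit = EFFORT_RANK[max_effort]
    keyword = decision_keyword.lower()
    return [
        name
        for name, decision, effort in MATRIX
        if keyword in decision.lower() and EFFORT_RANK[effort] <= limit
    ]


print(techniques_for("friction"))
# ['In-app widget', 'Usability / session replay']
print(techniques_for("friction", max_effort="Low"))
# ['In-app widget']
```

The lookup starts from the decision and an effort budget, and the technique falls out of it. That is the reading order the table is meant to encourage.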

Techniques Most VoC Articles Skip

Every top VoC article I reviewed covered surveys, interviews, focus groups, social listening, and support tickets. Three modern techniques show up almost nowhere, and they are the ones product teams lean on hardest.

Public voting boards. Most enterprise CX articles skip these because voting boards are product-native, not a CX research tool. For product teams they are the single cleanest signal for prioritization. Fifty votes from paid users on the same request is more honest than any survey result. See types of customer feedback for where voting fits in the broader feedback taxonomy.

In-app widgets. Most CX pieces treat these as "surveys on a button." They're not. A good in-app widget captures context the user never has to type (page URL, user tier, action context), and its completion rate is 5 to 10 times higher than that of a linked Typeform. We built ours at 18KB specifically so it never gets blamed for page load.

Async video feedback. Newer technique (Loom-style customer-recorded video) that sits between an interview and a survey. Cheaper than a live interview, richer than a text response. Still under-used.

After two years of running this at Feeqd, the combination I keep landing on is voting board plus in-app widget as the continuous surface, backed by monthly NPS/CSAT and quarterly interviews. Everything else is situational.

Common Mistakes With VoC Techniques

Using one technique for every decision. NPS tells you if loyalty is drifting. It does not tell you which feature to build next. Teams that run NPS quarterly and call it "VoC" are reading one instrument and pretending it's a dashboard.

Over-indexing on qualitative or quantitative. Qualitative without quantitative gives you vivid anecdotes that mislead. Quantitative without qualitative gives you numbers without reasons. You need both. Pair voting boards (aggregate) with interviews (depth).

Treating a vocal minority as aggregate demand. The five customers who email you every week are not representative of your base. This is the mistake I made most often in year one of Feeqd: reacting to the loudest voices instead of counting unique voters across segments. The fix is to segment your feedback by tier and account size before you aggregate, which is covered in more depth in voice of the customer best practices.
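Here is a minimal sketch of the difference between counting messages and counting unique voters per segment. The event tuples, customer IDs, and tier names are hypothetical, and it segments by tier only; account size would be one more key in the same structure.

```python
from collections import defaultdict

# Hypothetical feedback events: (customer_id, tier, request).
events = [
    ("c1", "free", "dark mode"),
    ("c1", "free", "dark mode"),
    ("c1", "free", "dark mode"),
    ("c1", "free", "dark mode"),
    ("c2", "paid", "sso"),
    ("c3", "paid", "sso"),
    ("c4", "enterprise", "sso"),
    ("c5", "paid", "dark mode"),
]


def raw_mentions(events):
    """Naive count: every message counts, so repeat senders dominate."""
    counts = defaultdict(int)
    for _, _, request in events:
        counts[request] += 1
    return dict(counts)


def unique_voters_by_tier(events):
    """Count each customer once per request, split by tier."""
    voters = defaultdict(lambda: defaultdict(set))
    for customer, tier, request in events:
        voters[request][tier].add(customer)
    return {
        request: {tier: len(ids) for tier, ids in tiers.items()}
        for request, tiers in voters.items()
    }


print(raw_mentions(events))
# {'dark mode': 5, 'sso': 3}
print(unique_voters_by_tier(events))
# {'dark mode': {'free': 1, 'paid': 1}, 'sso': {'paid': 2, 'enterprise': 1}}
```

By raw volume, dark mode looks like the winner. Counted by unique voters per tier, it is one persistent free user plus one paid user, while SSO has three distinct paying accounts behind it.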

Running techniques without a closure mechanism. Every technique above collects signal. None of them close the loop on their own. If users don't hear back, the channel dies. The loop is the product. Full playbook in close the feedback loop.

FAQ

What is the Voice of the Customer method?

The Voice of the Customer (VoC) method is the structured practice of capturing customer input across multiple channels and using it to drive product or experience decisions. It combines quantitative techniques (NPS, CSAT, voting), qualitative techniques (interviews, support mining), and behavioral techniques (usability testing, session replay). For product teams specifically, VoC is continuous and tied directly to the roadmap, not a quarterly research project. Full definition in what is voice of the customer.

What is the difference between VoC and VOP?

VoC (Voice of the Customer) captures what customers want and expect from your product. VOP (Voice of the Process) captures what your process actually delivers, its capability and consistency. The gap between the two is the improvement opportunity in Six Sigma. Product teams rarely use VOP because most software product work is not about process control; the term is more common in manufacturing and service operations.

What are examples of Voice of the Customer?

Three concrete examples from running this at Feeqd: (1) a feature request on our public board collecting 40 votes from paid users over six weeks, which moved to the roadmap the next cycle; (2) an NPS survey dropping 8 points quarter-over-quarter among enterprise accounts, which surfaced an onboarding gap we wouldn't have caught otherwise; (3) a pattern of support tickets mentioning the same confusing error message, which turned into a bug fix and a copy rewrite. Each used a different technique, each unlocked a different decision.

Which voice of customer technique produces the best ROI for a small product team?

Voting board plus in-app widget combined. Reason: both are low-effort to run, both produce signal that is already in a form you can act on, and they complement each other (the widget catches friction the moment it happens; the board aggregates demand over time). Add a monthly NPS survey and quarterly interviews on top when you have the bandwidth. Skip focus groups until you're large enough to have concepts worth stress-testing.

Choosing the Right Voice of Customer Technique

Ten techniques, one question: which decision am I trying to unlock? Pick the technique that produces the signal shape for that decision, not the one your favorite blog recommended.

If you want the broader analytics layer these techniques feed into, see our pillar guide on voice of the customer analytics. For the operational sequence that ties techniques together into a running program, see the voice of the customer process. For the principles that make each technique produce decisions instead of noise, see voice of the customer best practices. And for the broader taxonomy of feedback signals each technique collects, see types of customer feedback.

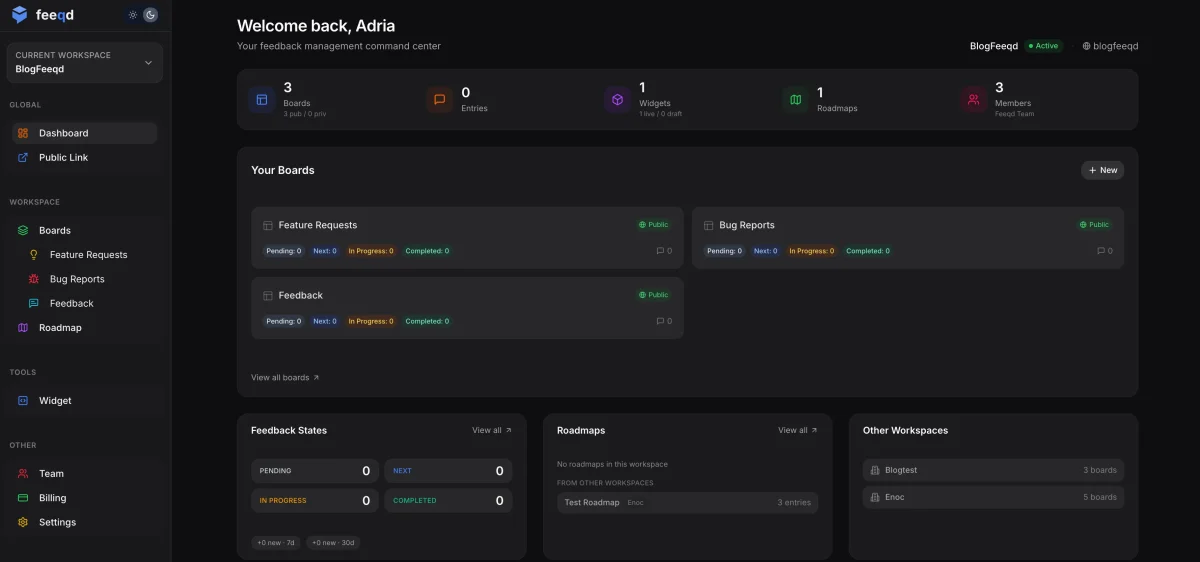

If you want a tool that bundles voting boards, in-app widgets, and public roadmaps (the three techniques product teams lean on hardest), that's what we built Feeqd for.
